1 Introduction and Related Works

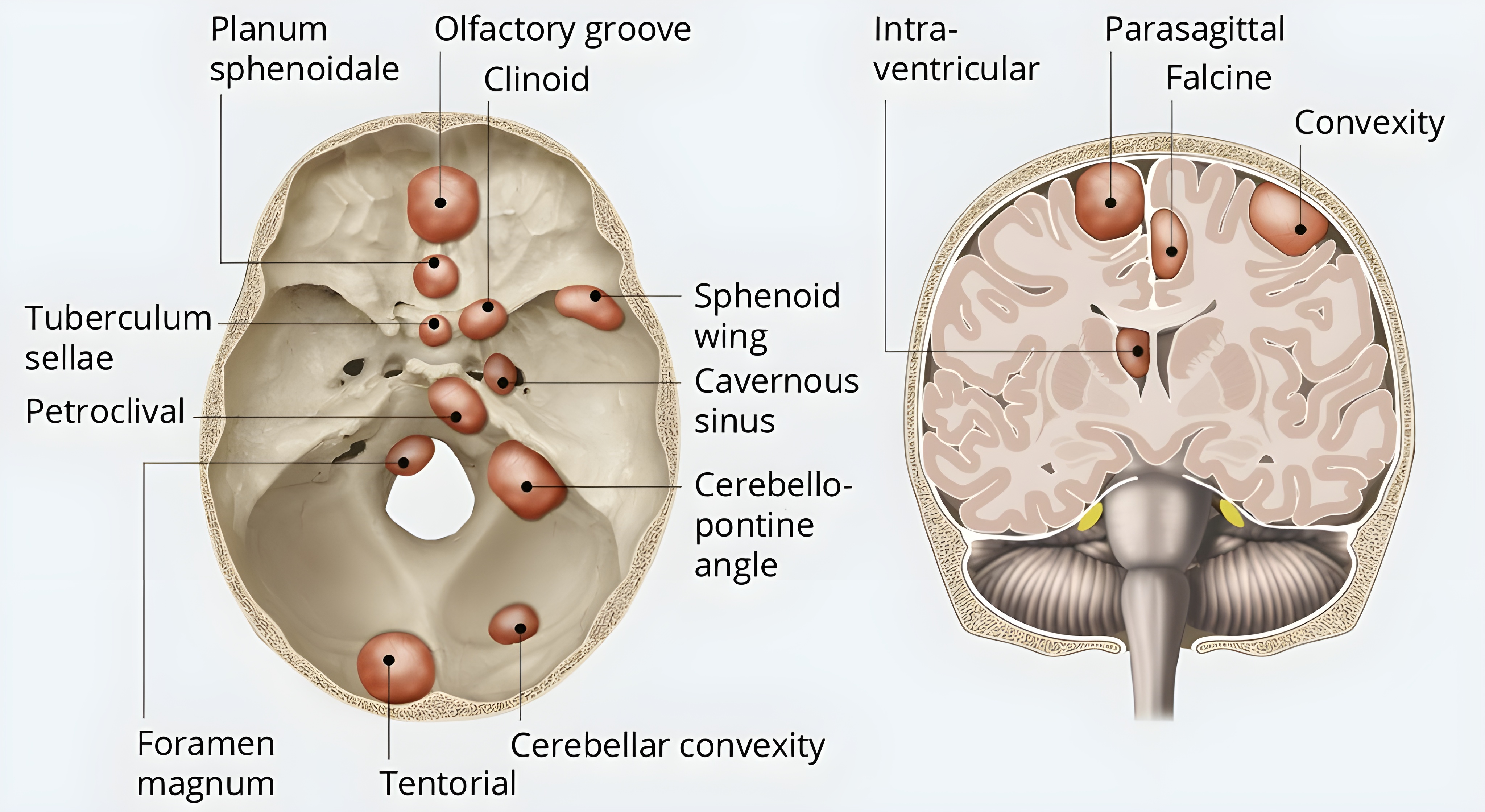

Meningiomas are the most common primary brain tumors, and several common treatment modalities, including surgical resection and radiation therapy, require accurate delineation of tumor components (Ogasawara et al., 2021; Rogers et al., 2017, 2020). When used clinically, meningioma segmentation is often performed on multi-sequence brain magnetic resonance imaging (MRI) comprising T1-weighted (T1), T2-weighted (T2), T2-weighted Fluid-Attenuated Inversion Recovery (FLAIR), and T1 post-contrast (T1Gd) sequences (Martz et al., 2022). Meningioma segmentation on brain MRI can be challenging due to the diverse morphology and location of meningiomas within the brain. Anatomically, meningiomas arise from the arachnoid layer of the meninges between the dura mater and pia mater and commonly present at supratentorial sites of dural reflection, along the sphenoid sinus, and the skull base. Less commonly, meningiomas occur in intraventricular and suprasellar regions, the olfactory groove, and in the posterior fossa along the petrous bone (LaBella et al., 2023). Examples of common anatomical locations of meningioma are depicted in Figure 1, which is an unmodified figure by Murek (Murek, 2024). Their extra-axial location can frequently lead to their exclusion, in whole or in part, during brain MRI skull-stripping pre-processing steps. Radiographically, meningiomas have a wide range of appearances, which contributes to the difficulty of creating accurate, generalizable automated segmentation models (Watts et al., 2014). Commonly encountered radiographic variants and findings include en plaque meningioma, which is a plaque-like sessile extension of tumor along the meninges, cystic meningioma components, dural tail extension, peri-tumoral edema, and multiple distinct lesions (Watts et al., 2014).

Recent advancements in segmentation techniques for brain tumors, particularly gliomas, through the application of deep learning and convolutional neural networks (CNNs), have shown promise in overcoming these challenges, offering increased accuracy and reproducibility compared to traditional methods (Pereira et al., 2016; Havaei et al., 2017; Bouget et al., 2022). Since their inception in 2012, the Brain Tumor Segmentation (BraTS) challenges have been instrumental in advancing the field of brain tumor image segmentation by providing comprehensive datasets that facilitate the development and benchmarking of segmentation algorithms (Menze et al., 2014; Bakas et al., 2017). The inaugural 2012 challenge had 35 training cases and 15 test cases focused solely on glioma, and the glioma dataset has since grown to over 2000 cases in the 2023 challenge. Studies by Menze et al. and Bakas et al. underscore the importance of the BraTS dataset in improving the segmentation accuracy for gliomas, leveraging multi-sequence MR images to improve the delineation of tumor tissues from non-tumorous brain matter (Menze et al., 2014; Bakas et al., 2017; Gordillo et al., 2013; Işın et al., 2016).

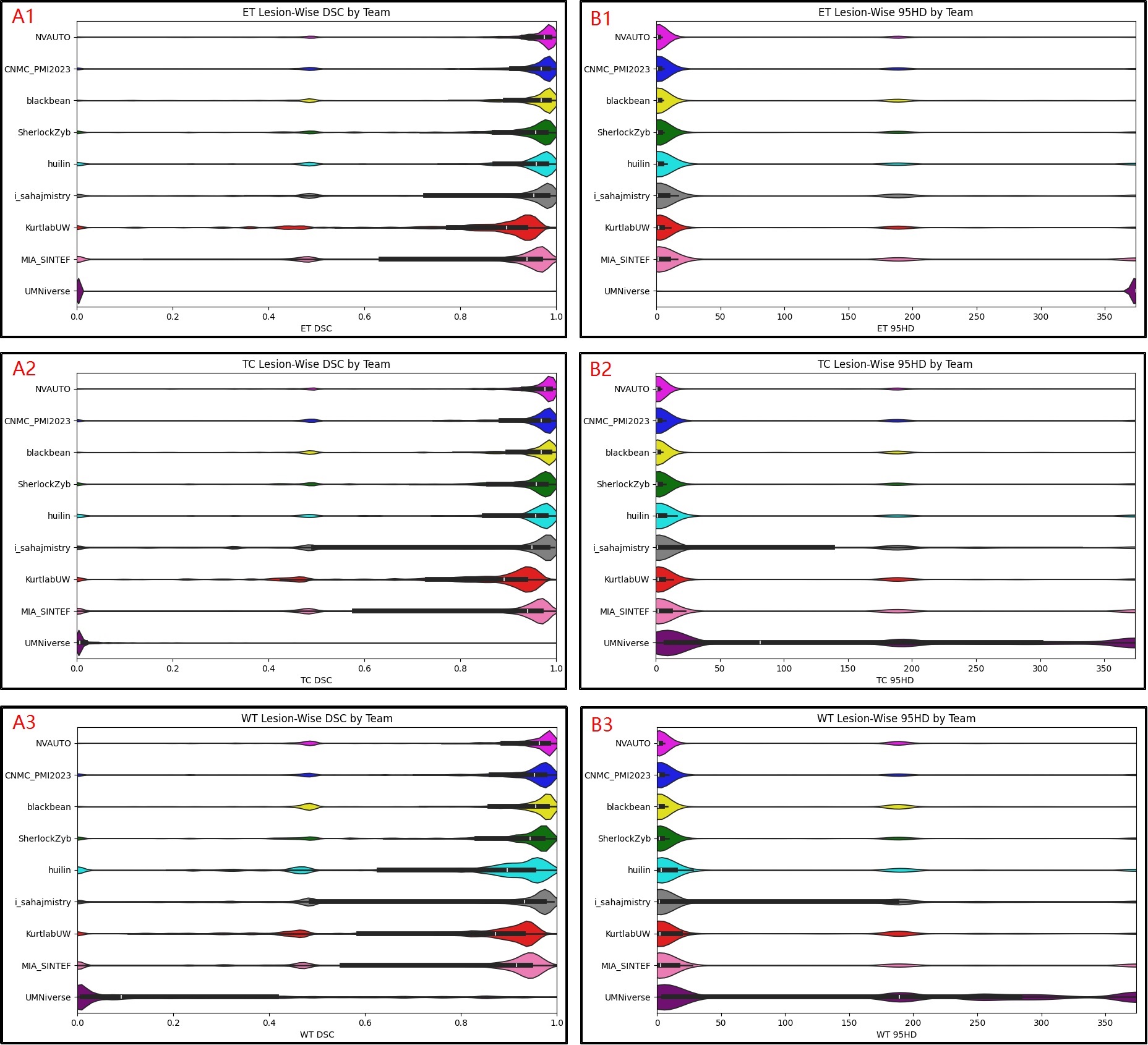

In 2023, the BraTS organizing committee hosted new automated segmentation challenges to additionally focus on pediatric tumors, gliomas diagnosed in sub-Saharan Africa, brain metastasis, and meningioma (bra, 2023; LaBella et al., 2023; Baid et al., 2021; Kazerooni et al., 2023; Moawad et al., 2023). Building on the foundation of prior BraTS challenges, the BraTS 2023 Intracranial Meningioma Segmentation Challenge aims to establish a community standard and benchmark for intracranial meningioma segmentation (Bakas et al., 2017; LaBella et al., 2023; Calabrese and LaBella, 2023). We present a comprehensive analysis of segmentation performance across nine teams participating in the challenge focusing on key metrics: Enhancing tumor (ET) dice similarity coefficient (DSC), tumor core (TC) DSC, and whole tumor (WT) DSC, ET 95% Hausdorff Distance (95HD), TC 95HD, and WT 95HD. These metrics were evaluated on a lesion-wise basis to account for the possibility of multiple lesions. In many clinical scenarios, particularly in diseases such as meningioma where patients may present with multiple lesions of varying sizes, global metrics tend to average performance over the entire image volume. This averaging can mask suboptimal performance on smaller or less conspicuous lesions. By contrast, lesion‐wise evaluation allows each individual lesion to be assessed separately, thereby providing a more nuanced picture of an algorithm’s performance. For instance, a segmentation algorithm might achieve a high overall DSC by accurately segmenting larger lesions while missing or poorly delineating smaller ones. Evaluating the DSC and 95HD on a lesion-by-lesion basis highlights such discrepancies, which is particularly important for clinical decision-making where even a single missed lesion could be significant. Advantages and disadvantages of lesion-wise metrics are listed below.

Advantages of lesion-wise metrics:

- Granular Assessment: Evaluates each lesion individually, revealing performance variability hidden in global metrics.

- Clinical Relevance: Aligns with clinical needs by ensuring even small, critical lesions are accurately segmented.

- Error Localization: Identifies specific algorithm weaknesses on a per-lesion basis.

Disadvantages of lesion-wise metrics:

- Noise Sensitivity: Small errors in tiny lesions can disproportionately impact metric values.

- Definition Ambiguity: Variability in defining individual lesions (especially confluent ones) may lead to inconsistent evaluations.
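The masking effect of global metrics can be illustrated with a small arithmetic sketch. The voxel counts below are hypothetical and chosen only for illustration: one large lesion is segmented perfectly while a second, small lesion is missed entirely.

```python
def dice(tp_voxels, pred_voxels, gt_voxels):
    """Dice similarity coefficient computed from voxel counts."""
    return 2 * tp_voxels / (pred_voxels + gt_voxels)


# Hypothetical case: a 1000-voxel lesion segmented perfectly
# and a 60-voxel lesion that the model misses completely.
large, small = 1000, 60

# Global DSC pools all voxels of the case into a single overlap score.
global_dsc = dice(tp_voxels=large, pred_voxels=large, gt_voxels=large + small)

# Lesion-wise DSC averages one score per lesion; the missed lesion scores 0.
lesion_wise_dsc = (dice(large, large, large) + 0.0) / 2

print(f"global DSC      = {global_dsc:.3f}")
print(f"lesion-wise DSC = {lesion_wise_dsc:.3f}")
```

Under these assumed counts the global DSC stays near 0.97 while the lesion-wise DSC drops to 0.50, which is the kind of discrepancy the lesion-wise evaluation is meant to expose.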

By evaluating the performance of each competing team's automated segmentation algorithm using lesion-wise metrics, we can identify state-of-the-art machine learning techniques for meningioma segmentation. We anticipate that these findings will extend beyond the technical realm to inform surgical approaches, radiation therapy planning, and the understanding of tumor behavior, such as the propensity for an extra-axial location, and ultimately to affect patient outcomes. As such, this study contributes to the technical field of medical imaging analysis and to the broader understanding of meningioma treatment and management strategies.

2 Methods

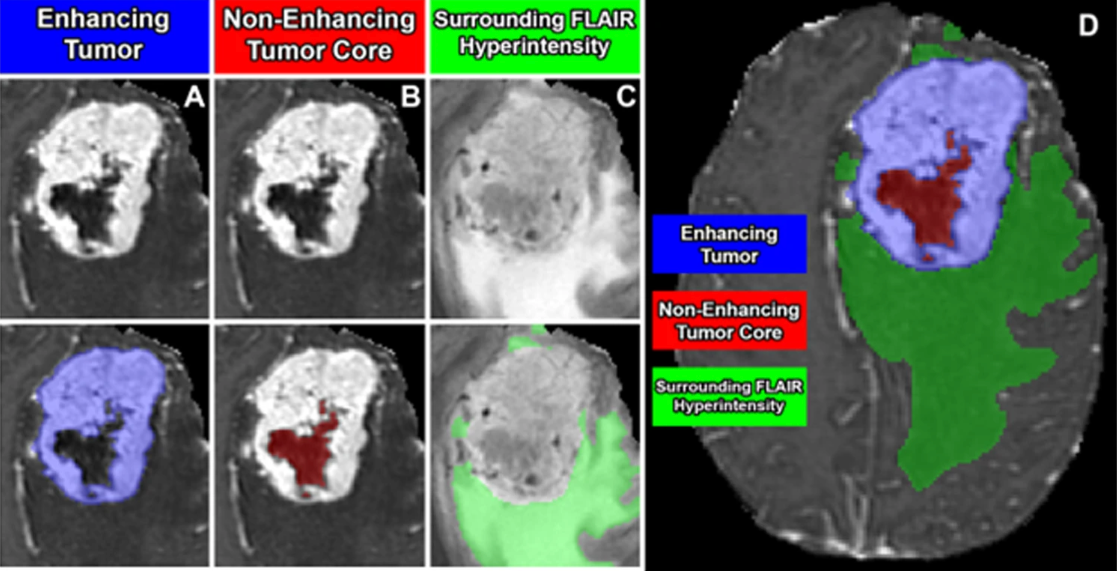

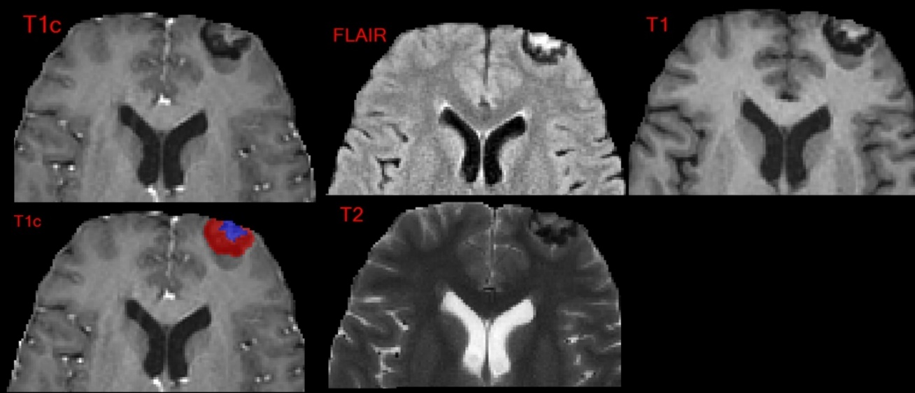

2.1 Challenge Data

BraTS Meningioma Challenge image data was contributed from 6 different United States academic medical centers: Duke University, Yale University, Thomas Jefferson University, University of California San Francisco, Missouri University, and University of Pennsylvania. Image data consisted of T1, T2, FLAIR, and T1Gd brain MRI sequences from patients with radiographic or pathologic diagnosis of intracranial meningioma. All data preprocessing was conducted using the FeTS tool, (Pati et al., 2022) and included conversion to NIfTI file format, co-registration, isotropic resampling to the SRI24 atlas space, and automated skull stripping (Schwarz et al., 2019; Thakur et al., 2019, 2020; Juluru et al., 2020; Smith, 2002). The skull stripping algorithm, part of the FeTS preprocessing workflow, was integral in removing non-brain tissue, including the skull and scalp, to isolate the intracranial structures. The brain extraction tool, widely used in neuroimaging pipelines, relies on deformable models and intensity-based thresholding to separate the brain from surrounding tissues (Smith, 2002). In this challenge, skull stripping was critical for preserving patient anonymity by preventing potential face reconstruction from MRI data and to standardize data preparation across institutions. However, it should be noted that meningiomas often extend through the skull and skull-base foramina, and any extra-cranial portions of the tumors were implicitly excluded by this process (Smith, 2002). Despite this limitation, skull stripping was applied to ensure consistency with other BraTS 2023 challenges and to minimize the inclusion of non-brain tissue. After image data pre-processing, tumor compartment labels were created using a comprehensive pre-segmentation, manual correction, and expert revision process, including enhancing tumor, non-enhancing tumor, and surrounding non-enhancing FLAIR hyperintensity (SNFH) as seen in Figure 2, which is an unmodified figure by LaBella et al. 
(LaBella et al., 2023, 2024). The initial pre-segmentation was performed using a deep convolutional neural network-based model (Isensee et al., 2021). Subsequently, 39 annotators manually reviewed and refined the segmentations. These annotators had varying levels of experience, from medical students to fellowship-trained neuroradiologists. Each annotator was given instructions on how to use the ITK-SNAP annotation software (Yushkevich et al., 2006) as well as a document on common errors of meningioma pre-segmentation, as discussed in the dataset resource paper by LaBella et al. (LaBella et al., 2024). After each annotator completed their manual corrections, the labels were sent to a board-certified, fellowship-trained neuroradiologist (EC) for final approval. This multi-step approach ensured the accuracy and consistency of the segmentation labels, incorporating multiple rounds of revision as needed to achieve high-quality final segmentations. All participating institutions received Institutional Review Board and Data Transfer Agreement approvals before contributing data, ensuring compliance with relevant regulatory authorities.

2.2 Challenge Procedures and Timeline

The BraTS 2023 Intracranial Meningioma Segmentation Challenge was hosted on the Synapse platform using the BraTS Pre-operative Meningioma Dataset (bra, 2023). To access the challenge dataset and to be eligible for submission of automated segmentation models, participants were required to register as a participating team on the Synapse platform. Registered teams developed automated segmentation algorithms that trained on multi-sequence MRI of pre-treatment intracranial meningioma with associated ground truth labels that were released to the participating teams in May 2023.

In June 2023, each of the participating teams had access to additional validation data consisting of multi-sequence MRI cases. For validation data, teams were able to assess segmentation performance of their models by submitting predicted labels through the Synapse platform, but individual ground truth segmentations were not made publicly available. From July until August 2023, participating teams utilized the validation dataset to fine tune their segmentation models and compose short paper manuscripts. At the end of the validation phase, each participating team uploaded their optimal automated segmentation model and respective manuscript as an MLCube container, which was used for evaluation in the testing phase. During the testing phase, the BraTS organizing committee internally evaluated each of the participating team’s automated segmentation models on the hidden test set of pre-operative meningioma cases with ground truth labels.

2.3 Algorithm Evaluation

During the testing phase, the BraTS organizing committee evaluated metrics on three regions of interest: ET, TC, and WT. ET was solely the enhancing tumor compartment label. TC was the combination of enhancing tumor and non-enhancing tumor compartment labels. WT was the combination of enhancing tumor, non-enhancing tumor, and SNFH compartment labels. Note that the term “whole tumor” was used across the BraTS 2023 cluster of challenges for consistency; however, this term is not entirely accurate for meningioma, where SNFH typically does not contain any tumor but rather represents associated vasogenic edema. Metrics used for evaluation included DSC and 95HD, both evaluated on a lesion-wise level. The DSC quantifies the similarity between two samples, which, in this context, refers to the overlap between the automated segmentation and the expert annotated ground truth labels for each respective tumor compartment. The 95HD is the 95th percentile of the distances between the boundary of the automated segmentation and the boundary of the ground truth label, and it captures the larger boundary errors observed in the segmentation results. The 95HD was used in lieu of the standard 100% Hausdorff Distance to reduce sensitivity to outlier voxels, particularly for smaller lesions where the standard 100% Hausdorff Distance may be overestimated. For previous BraTS challenges, a global DSC was used for challenge rankings. However, lesion-wise metrics were adopted for the 2023 challenge as there was greater potential for multiple distinct lesions in a single patient image (most notably for the metastasis and meningioma sub-challenges). Distinct lesions were identified by performing a 1 voxel symmetric dilation on the ground truth WT masks, and then performing a 26-connectivity 3D connected component analysis to determine whether overlap between distinct lesions exists (Rudie, 2023). A case’s lesion-wise DSC and 95HD scores are calculated based on equations (1) and (2) respectively, where L is the number of ground truth lesions and (true positive (TP) + false negative (FN)) is equal to L (Saluja, 2023). 
A predicted lesion is counted as a TP if at least 1 predicted voxel overlaps with the respective ground truth’s respective region of interest mask. A lesion is counted as a FN if the model does not predict any voxels within the ground truth’s respective region of interest mask. A predicted lesion is counted as a false positive (FP) if the model predicts a distinct lesion that does not overlap with any ground truth lesions’ voxels. The lesion-wise scoring system assigned a specific lesion’s region of interest a DSC score of 0 and a 95HD score of 374 for FP or FN. These equations effectively calculate the average DSC or 95HD values across all of the predicted lesions for a given case. The scoring system also excluded evaluation for ground truth lesions smaller than 50 voxels to avoid evaluation of false ground truth lesions missed in dataset review. This threshold was discussed and decided by fellowship trained neuroradiologists after ground truth label review (Saluja, 2023; Rudie, 2023). Evaluation of submissions was performed on MLCommons’ MedPerf, an open federated AI/ML evaluation platform (Karargyris et al., 2023). MedPerf automated the evaluating pipeline by running the participants’ models on the testing datasets of each contributing site’s data and calculating evaluation metrics on the resulting predictions. Finally, the Synapse platform retrieved the metrics results from the MedPerf server and ranked them to determine the winner (MLCommons Association, 2024; Pati et al., 2023; Karargyris et al., 2023).

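$$\text{Lesion-wise DSC} = \frac{\sum_{i=1}^{L} \text{DSC}(l_i)}{TP + FN + FP} \tag{1}$$

$$\text{Lesion-wise 95HD} = \frac{\sum_{i=1}^{L} \text{95HD}(l_i)}{TP + FN + FP} \tag{2}$$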
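The lesion-wise DSC described above can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the evaluation code used by the organizers: masks are represented as sets of `(x, y, z)` coordinates, the 50-voxel minimum lesion size is omitted, a case with no ground truth and no predicted lesions is arbitrarily scored 1.0, and all function names are hypothetical.

```python
from itertools import product

# 26-connectivity: every neighbour in the surrounding 3x3x3 cube.
OFFSETS = [d for d in product((-1, 0, 1), repeat=3) if d != (0, 0, 0)]


def neighbours(voxel):
    x, y, z = voxel
    return [(x + dx, y + dy, z + dz) for dx, dy, dz in OFFSETS]


def dilate(voxels):
    """One-voxel dilation of a set of (x, y, z) coordinates."""
    grown = set(voxels)
    for v in voxels:
        grown.update(neighbours(v))
    return grown


def connected_components(voxels):
    """Split a voxel set into 26-connected components."""
    remaining, components = set(voxels), []
    while remaining:
        stack = [remaining.pop()]
        component = set(stack)
        while stack:
            for n in neighbours(stack.pop()):
                if n in remaining:
                    remaining.remove(n)
                    component.add(n)
                    stack.append(n)
        components.append(component)
    return components


def dice(a, b):
    return 2 * len(a & b) / (len(a) + len(b))


def lesion_wise_dsc(gt, pred):
    # Ground truth lesions are defined on the dilated mask, then restricted
    # back to the original ground truth voxels.
    gt_lesions = [c & gt for c in connected_components(dilate(gt))]
    pred_lesions = connected_components(pred)
    matched, scores = set(), []
    for lesion in gt_lesions:
        hits = [i for i, p in enumerate(pred_lesions) if p & lesion]
        matched.update(hits)
        if hits:  # true positive: at least one predicted voxel overlaps
            merged = set().union(*(pred_lesions[i] for i in hits))
            scores.append(dice(lesion, merged))
        else:  # false negative: lesion missed entirely, scored 0
            scores.append(0.0)
    # Predicted components touching no ground truth lesion are false positives;
    # each adds a score of 0 by enlarging the denominator.
    false_positives = len(pred_lesions) - len(matched)
    denominator = len(gt_lesions) + false_positives
    return sum(scores) / denominator if denominator else 1.0
```

For example, a case with two ground truth lesions in which one is segmented perfectly and the other is missed scores 0.5 under this sketch, and adding one spurious predicted lesion lowers the score to one third. The 95HD variant would follow the same matching logic, with the fixed penalty value replacing 0 for unmatched lesions.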

2.4 Participant Ranking and Workshop Proceedings

The BraTS organizing committee internally evaluated each participating team’s automated segmentation model on the hidden test set of pre-operative meningioma cases to determine lesion-wise DSC and 95HD for each of the three regions of interest. The participants were ranked against each other for each region of interest’s lesion-wise metric independently. A total of 6 independent rankings were calculated to reflect the two metrics, DSC and 95HD, for each of the ET, TC, and WT regions of interest. A “BraTS segmentation score” was then calculated as the average of the six independent rankings. For example, if a team had the 3rd best ET DSC, 2nd best TC DSC, 3rd best WT DSC, 3rd best ET 95HD, 2nd best TC 95HD, and 4th best WT 95HD, then that team would have an average ranking of (3+2+3+3+2+4) / 6 = 2.83 as their BraTS segmentation score. The BraTS segmentation score was used to determine the final participant rankings relative to one another. The three top-ranked teams were invited to present their findings at the BraTS workshop at the 2023 MICCAI Annual Meeting held in Vancouver, Canada, although the final rank was hidden until the workshop. At the BraTS workshop, the BraTS organizing committee announced the final placement of the three top-ranked teams. Monetary awards of $1400, $1000, and $800 were presented to the three top-ranked teams, respectively.
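The rank averaging described above can be sketched as follows. The function names and the metric values passed to `rank_teams` are hypothetical, and tie handling is not addressed here because the text does not specify it.

```python
from statistics import mean


def rank_teams(values, higher_is_better):
    """Rank teams on one metric; rank 1 is best. Ties are not handled."""
    ordered = sorted(values, key=values.get, reverse=higher_is_better)
    return {team: position + 1 for position, team in enumerate(ordered)}


def brats_segmentation_score(ranks):
    """Average of a team's six per-metric ranks (lower is better)."""
    return mean(ranks.values())


# DSC is ranked high-to-low, 95HD low-to-high (hypothetical values).
dsc_ranks = rank_teams({"A": 0.90, "B": 0.85, "C": 0.88}, higher_is_better=True)
hd_ranks = rank_teams({"A": 25.0, "B": 40.0, "C": 30.0}, higher_is_better=False)

# The worked example from the text: ranks of 3, 2, 3, 3, 2, and 4.
example = {
    "ET DSC": 3, "TC DSC": 2, "WT DSC": 3,
    "ET 95HD": 3, "TC 95HD": 2, "WT 95HD": 4,
}
print(round(brats_segmentation_score(example), 2))
```

Running the sketch on the worked example reproduces the 2.83 score given in the text.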

2.5 Challenge Results Analysis

Overall participant and individual team statistical analysis of DSC and 95HD lesion-wise performance was performed using Python and Microsoft Excel (Excel). Analysis included calculation of participant average, standard deviation, and median DSC and 95HD for each region of interest; overall average and median DSC and 95HD across all participants for each region of interest; volume calculations of each lesion; and number of abutting voxels of lesions compared to the pre-processed brain MRI.

2.6 Analysis of Tumor Abutment of Brain Masks

Given the extra-axial location of meningiomas, we sought to evaluate the proportion of meningiomas that were potentially cropped or excluded by the automated skull stripping process. To determine the volume of each compartment label and the number of tumor compartment voxels that were directly abutting the edge of the skull-stripped images for each case, the NumberOfEdgeNeighbors.py script was internally run by BraTS organizers, which evaluated each of the 1483 meningioma MRI cases (LaBella, 2024). This analysis was performed internally due to restricted access to the hidden test dataset. To determine significance of association of WT volume compared to abutting voxels, the Pearson Correlation Coefficient was calculated with associated p-value with a significance level of 0.05.
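The idea behind the abutment count can be sketched as below. This is not the NumberOfEdgeNeighbors.py script itself, whose details are not given here; it is a minimal illustration that assumes a tumor voxel "abuts" the edge when at least one of its six face neighbours lies outside the skull-stripped brain mask, with masks represented as sets of `(x, y, z)` coordinates.

```python
# Assumption: abutment is judged with 6-connectivity (face neighbours only).
FACE_NEIGHBOURS = [
    (1, 0, 0), (-1, 0, 0),
    (0, 1, 0), (0, -1, 0),
    (0, 0, 1), (0, 0, -1),
]


def count_abutting_voxels(tumor, brain):
    """Count tumor voxels with at least one face neighbour outside the brain mask."""
    count = 0
    for x, y, z in tumor:
        if any((x + dx, y + dy, z + dz) not in brain
               for dx, dy, dz in FACE_NEIGHBOURS):
            count += 1
    return count


# Toy 3x3x3 "brain": only the centre voxel is fully enclosed.
brain = {(x, y, z) for x in range(3) for y in range(3) for z in range(3)}
print(count_abutting_voxels({(1, 1, 1)}, brain))             # interior voxel only
print(count_abutting_voxels({(0, 1, 1), (1, 1, 1)}, brain))  # one voxel on the edge
```

A different neighbourhood definition (for example 26-connectivity) would give somewhat higher counts, so the choice should be treated as a parameter of the analysis.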

3 Results

A total of 1000 training (70%) multi-sequence pre-operative meningioma MRI cases, 141 validation cases (10%), and 283 test cases (20%) were utilized within the BraTS Meningioma Challenge (Table 1) in adherence with standard machine learning protocols.

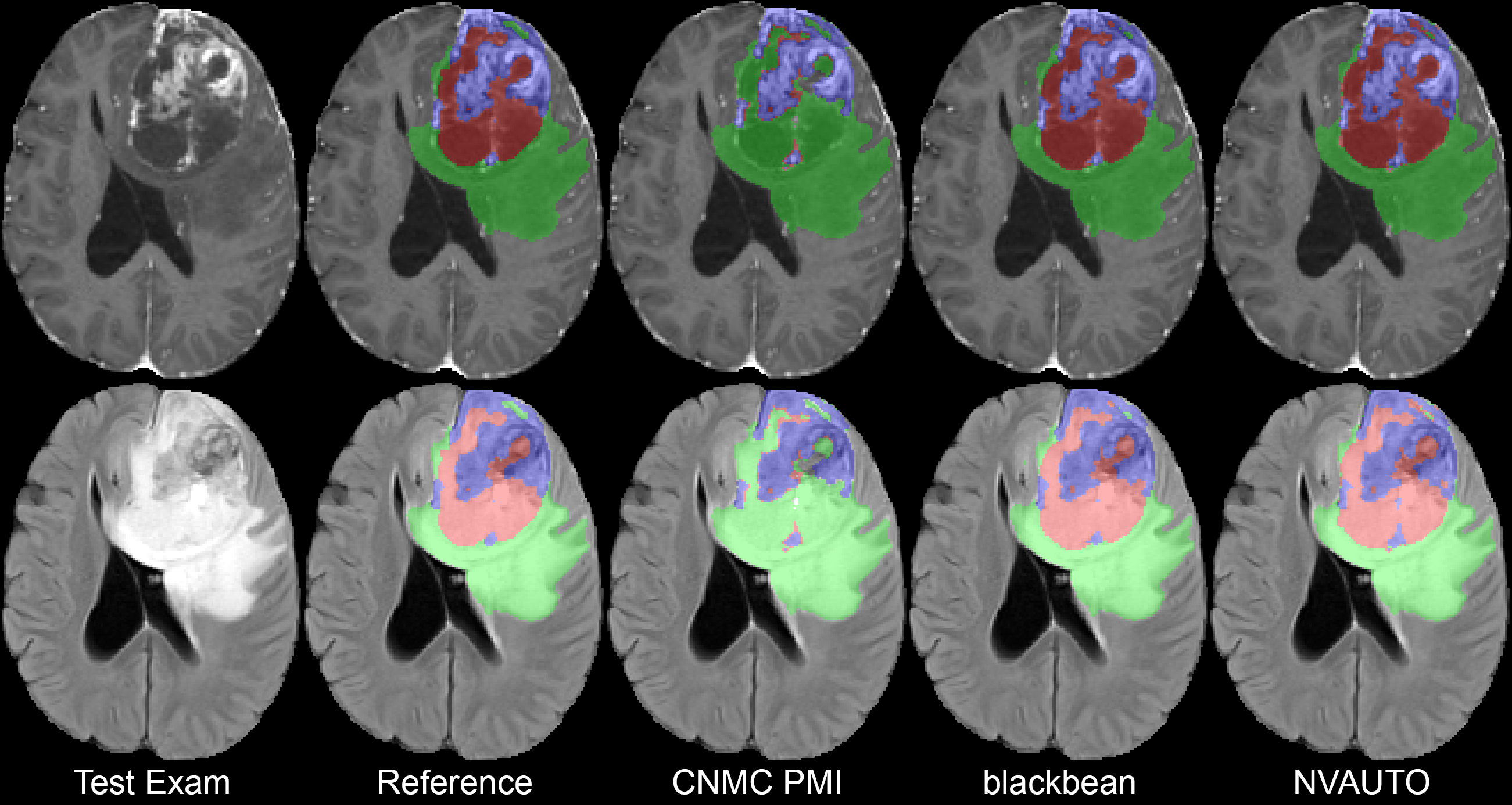

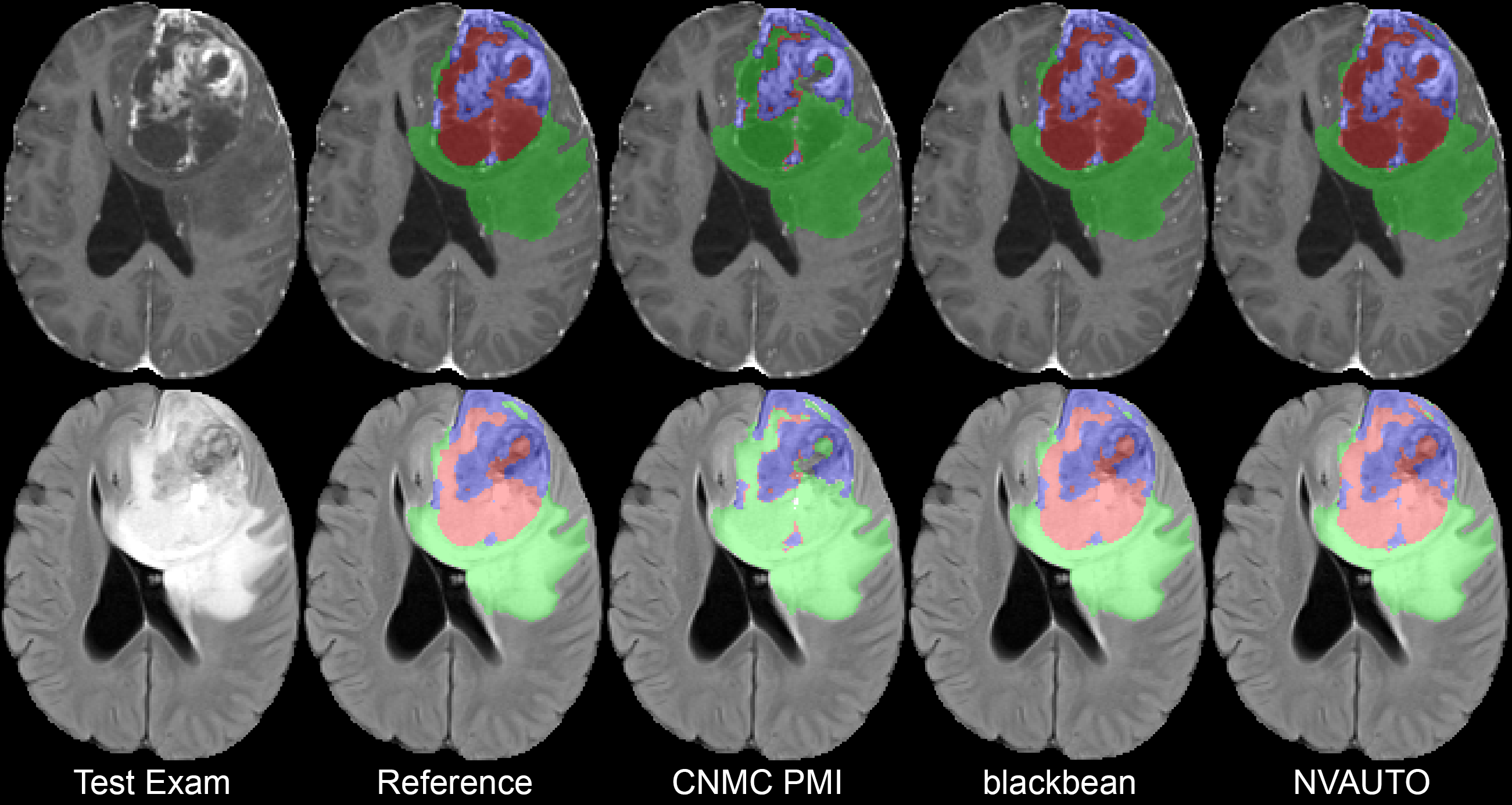

A total of 9 participating teams submitted automated segmentation models as MLCube containers for the BraTS Challenge 2023: Intracranial Meningioma. The statistical summary of the teams’ performances is outlined in Tables 2 and 3, which list the calculated DSC and 95HD values, respectively. The maximum recorded average DSCs for ET, TC, and WT are 0.899, 0.904, and 0.871, respectively, and the minimum recorded average 95HDs for ET, TC, and WT are 23.9, 21.8, and 31.4, respectively, highlighting the upper bounds of team performance within the challenge. The overall challenge summary statistics across all participating teams are listed in Table 4 for both DSC and 95HD. Figure 3 shows violin plots of DSC and 95HD scores for the ET, TC, and WT regions across all of the participating teams. Figure 4 shows a comparison of the predictions for a single testing set case from each of the top 3 participants’ algorithms.

| | Train | Validation | Test |
|---|---|---|---|
| Total Count | 1000 | 141 | 283 |
| Duke | 315 | 46 | 91 |
| TJU | 236 | 34 | 68 |
| Miss | 132 | 16 | 33 |
| Penn | 31 | 4 | 9 |
| UCSF | 126 | 18 | 35 |
| Yale | 160 | 23 | 47 |
| Release Date | May 2023 | June 2023 | Never released |

| Team Name | ET DSC | TC DSC | WT DSC | Rank (ET, TC, WT) |
|---|---|---|---|---|
| NVAUTO | 0.899 ± 0.189 (0.976) | 0.904 ± 0.180 (0.976) | 0.871 ± 0.198 (0.964) | 1, 1, 1 |
| CNMC_PMI2023 | 0.876 ± 0.217 (0.968) | 0.867 ± 0.227 (0.968) | 0.851 ± 0.231 (0.953) | 2, 3, 2 |
| blackbean | 0.870 ± 0.222 (0.969) | 0.879 ± 0.206 (0.969) | 0.845 ± 0.226 (0.957) | 3, 2, 3 |
| Sherlock | 0.854 ± 0.234 (0.958) | 0.850 ± 0.239 (0.959) | 0.831 ± 0.244 (0.945) | 4, 4, 4 |
| huilin | 0.830 ± 0.276 (0.959) | 0.820 ± 0.258 (0.958) | 0.761 ± 0.297 (0.897) | 5, 5, 6 |
| i_sahajmistry | 0.799 ± 0.291 (0.954) | 0.773 ± 0.303 (0.949) | 0.764 ± 0.296 (0.932) | 6, 7, 5 |
| Kurtlab-UW | 0.790 ± 0.237 (0.896) | 0.774 ± 0.250 (0.892) | 0.745 ± 0.257 (0.872) | 7, 6, 8 |
| MIA | 0.775 ± 0.305 (0.940) | 0.757 ± 0.307 (0.941) | 0.751 ± 0.306 (0.916) | 8, 8, 7 |
| UMNiverse | 0.007 ± 0.084 (0.000) | 0.027 ± 0.078 (0.006) | 0.241 ± 0.290 (0.092) | 9, 9, 9 |

| Team Name | ET 95HD | TC 95HD | WT 95HD | Rank (ET, TC, WT) |
|---|---|---|---|---|
| NVAUTO | 23.9 ± 68.5 (0.96) | 21.8 ± 64.6 (1.0) | 31.4 ± 71.8 (1.0) | 1, 1, 1 |
| CNMC_PMI2023 | 30.0 ± 80.9 (1.0) | 31.7 ± 83.5 (1.0) | 35.2 ± 86.8 (1.62) | 2, 3, 2 |
| blackbean | 34.3 ± 82.0 (1.0) | 29.9 ± 75.0 (1.0) | 41.2 ± 84.3 (1.0) | 3, 2, 4 |
| Sherlock | 34.3 ± 87.5 (1.07) | 35.1 ± 88.3 (1.93) | 39.7 ± 93.1 (1.94) | 4, 4, 3 |
| Kurtlab-UW | 39.9 ± 90.2 (2.0) | 45.9 ± 95.8 (2.0) | 56.0 ± 100.4 (1.05) | 5, 5, 6 |
| huilin | 46.9 ± 104.2 (1.0) | 47.7 ± 105.2 (1.41) | 55.9 ± 106.9 (3.61) | 6, 6, 5 |
| i_sahajmistry | 56.5 ± 108.9 (1.0) | 64.1 ± 112.5 (1.41) | 66.2 ± 111.0 (2.0) | 7, 8, 8 |
| MIA | 61.4 ± 117.6 (1.41) | 61.6 ± 118.0 (1.73) | 64.2 ± 119.5 (2.83) | 8, 7, 7 |
| UMNiverse | 371.4 ± 314.9 (374.0) | 150.1 ± 151.5 (81.5) | 158.8 ± 146.9 (189.1) | 9, 9, 9 |

| Statistic | ET DSC | TC DSC | WT DSC | ET 95HD | TC 95HD | WT 95HD |
|---|---|---|---|---|---|---|
| Average | 0.830 | 0.820 | 0.764 | 39.9 | 46.0 | 55.9 |
| Std | 0.234 | 0.239 | 0.257 | 87.5 | 95.76 | 100.4 |
| Median | 0.958 | 0.958 | 0.933 | 1.00 | 1.414 | 2.00 |
| (Q1, Q3) | [0.872, 0.981] | [0.850, 0.979] | [0.630, 0.972] | [1.00, 4.24] | [1.00, 6.27] | [1.00, 14.5] |
| Max Avg | 0.899 | 0.904 | 0.871 | 23.9 | 21.8 | 31.4 |
| Max Med | 0.976 | 0.976 | 0.964 | 1.00 | 1.00 | 1.00 |

The top 3 ranked teams for the BraTS Meningioma Challenge were NVAUTO, blackbean, and CNMC_PMI2023 (Myronenko et al., 2023; Huang et al., 2023b; Capellan-Martin, 2023). Each of these teams was invited to give an oral presentation of their findings and methods at MICCAI 2023 in Vancouver, Canada. Their average lesion-wise DSC, median lesion-wise DSC, and average lesion-wise 95HD, each averaged over ET, TC, and WT, are listed in Table 5. NVAUTO’s winning MONAI Auto3DSeg framework and blackbean’s STU-Net framework are open-source and freely accessible (Apache License 2.0) (Myronenko et al., 2023; Myronenko, 2018; Consortium, 2020; Huang et al., 2023b, a). CNMC_PMI2023 utilized an ensemble of nnU-Net, an open-source and freely accessible Apache License 2.0 model, and Swin Transformer, a freely accessible MIT License model (Capellan-Martin, 2023). The team leaders, submitted short paper titles, and technical aspects of their algorithms are listed below:

1. NVAUTO: Andriy Myronenko et al., Auto3DSeg for Brain Tumor Segmentation from 3D MRI in BraTS 2023 Challenge (Myronenko et al., 2023).

NVAUTO employed the Auto3DSeg tool from MONAI for brain tumor segmentation using 3D MRI scans (Myronenko, 2018; Consortium, 2020; Myronenko et al., 2023). The core of the model architecture used in the challenge was SegResNet, a U-Net based convolutional neural network designed for semantic segmentation tasks. This model utilizes an encoder-decoder structure, incorporating repeated ResNet blocks with batch normalization and deep supervision, which helps guide training through multiple layers of the network (Myronenko, 2018). To improve performance and robustness, several data augmentation techniques were applied, including random affine transformations, flipping, intensity scaling, shifting, noise addition, and blurring. These augmentations help the model generalize better by simulating variations in MRI data. The loss function used for training combined Dice loss and focal loss, with the goal of addressing class imbalance by emphasizing harder-to-segment areas and penalizing inaccurate segmentations of minority classes. Additionally, the loss was summed across deep-supervision sublevels, meaning the network computed losses at various resolution scales to refine the segmentation. The optimizer employed was AdamW, with an initial learning rate of , gradually reduced to zero using the cosine annealing scheduler. This adaptive optimization method, combined with a learning rate decay, ensures better convergence and prevents overfitting. Weight decay regularization of was also used to prevent overfitting by penalizing large weights in the model. The Auto3DSeg framework was designed to be user-friendly, requiring minimal manual input. It automates several stages of the training and optimization process, making it accessible even to non-experts. Advanced users can fine-tune various parameters, such as hyperparameters and model architecture, for improved performance. 
The training setup leveraged 8 NVIDIA V100 GPUs with 16 GB of memory each, and a 5-fold cross-validation process was used to ensure generalizability across different MRI datasets, further improving the model’s accuracy and robustness.

2. blackbean: Ziyan Huang et al., Evaluating STU-Net for Brain Tumor Segmentation (Huang et al., 2023b).

Blackbean utilized the Scalable and Transferable U-Net (STU-Net) model in the 2023 BraTS Challenge (Huang et al., 2023b, a). STU-Net builds upon the nnU-Net architecture but introduces key modifications to enhance its scalability and transferability for large-scale medical image segmentation tasks (Huang et al., 2023a; Isensee et al., 2021). The model’s architecture ranges from 14 million to 1.4 billion parameters, enabling flexibility depending on computational resources. The core innovation lies in the incorporation of residual connections to address gradient diffusion and downsampling blocks within each encoder stage for more efficient feature extraction. STU-Net also utilizes nearest-neighbor interpolation with a 1×1×1 convolution layer for upsampling, which improves the model’s ability to generalize and transfer learning across different imaging tasks (Huang et al., 2023a). A compound scaling strategy ensures balanced growth of both encoder and decoder components, optimizing both depth and width. Pre-trained on the TotalSegmentator dataset, which covers 104 foreground classes, the model demonstrates robust transferability to the BraTS brain tumor segmentation task (Wasserthal et al., 2022). Blackbean adhered to the default data pre-processing, data augmentation, and training procedures provided by nnU-Net, and they utilized the SGD optimizer with a Nesterov momentum of 0.99 and a weight decay of (Isensee et al., 2021). The batch size was fixed at 2, and each epoch consisted of 250 iterations. The learning rate decay followed the poly learning rate policy: . Data augmentation techniques used during training included additive brightness, gamma, rotation, scaling, mirror, and elastic deformation. The pre-training patch size on the TotalSegmentator dataset was 128 × 128 × 128 (Wasserthal et al., 2022). Fine-tuning patch sizes on downstream tasks were automatically configured by nnU-Net (Isensee et al., 2021).

3. CNMC_PMI2023: Daniel Capellán-Martín et al., Model Ensemble for Brain Tumor Segmentation in Magnetic Resonance Imaging (Capellan-Martin, 2023).

CNMC_PMI2023 used an ensemble-based approach. The ensemble strategy combined two state-of-the-art deep learning models: nnU-Net and Swin UNETR (Isensee et al., 2021; Tang et al., 2022). The 3D nnU-Net model was trained using five-fold cross-validation, with input images divided into patches of 128 × 160 × 112 voxels (Isensee et al., 2021). The output consisted of three channels corresponding to the three tumor sub-regions. A combined Dice loss and cross-entropy loss function was employed, optimized using the stochastic gradient descent (SGD) algorithm with Nesterov momentum (learning rate: 0.01, momentum: 0.99, weight decay: ). Inference was conducted using a sliding window approach. The vision transformer-based 3D Swin UNETR model was trained using five-fold cross-validation, with input patches of 96 × 96 × 96 voxels (Tang et al., 2022). The output was four channels: three tumor sub-regions and background. The combined Dice loss and focal loss function was optimized using the AdamW optimizer (learning rate: 0.0001, momentum: 0.99, weight decay: ). To improve segmentation accuracy and robustness, predictions from nnU-Net and Swin UNETR were ensembled. The ensembling process involved combining outputs for the tumor regions (WT, TC, and ET) from each model across the five cross-validation folds. Given the task’s emphasis on lesion-wise evaluation, a post-processing step was developed to remove small disconnected regions of fewer than 50 voxels. Training for nnU-Net models was conducted on an NVIDIA A100 GPU with 40GB of memory, while Swin UNETR models were trained on NVIDIA A5000 (24GB) and A6000 (48GB) GPUs. Hyperparameter optimization was carried out using the Optuna framework (Akiba et al., 2019).
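A post-processing step of the kind CNMC_PMI2023 describes, which removes small disconnected predicted regions before lesion-wise scoring, might look like the sketch below. This is not the team's implementation: the 26-connectivity choice, the set-of-coordinates mask representation, and the function names are assumptions made for illustration, and only the 50-voxel threshold comes from the text.

```python
from itertools import product

# Assumption: regions are separated using 26-connectivity.
OFFSETS = [d for d in product((-1, 0, 1), repeat=3) if d != (0, 0, 0)]


def connected_components(voxels):
    """Split a set of (x, y, z) voxels into 26-connected components."""
    remaining, components = set(voxels), []
    while remaining:
        stack = [remaining.pop()]
        component = set(stack)
        while stack:
            x, y, z = stack.pop()
            for dx, dy, dz in OFFSETS:
                n = (x + dx, y + dy, z + dz)
                if n in remaining:
                    remaining.remove(n)
                    component.add(n)
                    stack.append(n)
        components.append(component)
    return components


def remove_small_regions(pred, min_voxels=50):
    """Keep only predicted components with at least `min_voxels` voxels."""
    kept = set()
    for component in connected_components(pred):
        if len(component) >= min_voxels:
            kept |= component
    return kept


# Toy prediction: a 64-voxel block plus a single stray voxel.
block = {(x, y, z) for x in range(4) for y in range(4) for z in range(4)}
cleaned = remove_small_regions(block | {(20, 20, 20)})
print(len(cleaned))
```

Because every spurious predicted component counts as a false positive under lesion-wise scoring, discarding tiny isolated regions can raise the lesion-wise score even when it barely changes a global DSC.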

| Team Name | Average DSC | Median DSC | Average 95HD |
|---|---|---|---|
| NVAUTO | 0.8909 | 0.9855 | 25.70 |
| blackbean | 0.8643 | 0.9861 | 34.84 |
| CNMC_PMI2023 | 0.8638 | 0.9855 | 32.30 |

Of note, NVAUTO placed in the top 2 for each of the five distinct BraTS 2023 automated segmentation challenges. NVAUTO came in first place for Meningioma, BraTS-Africa, Brain Metastases; and came in second place for Adult Glioma and Pediatric Tumors. For the meningioma challenge, NVAUTO had a total of 228 of 283 testing phase cases with a tumor core DSC 0.90. Additionally, during the public validation phase, as described in their in-person oral presentation at MICCAI, NVAUTO reported achieving an average composite DSC of 0.935, which is substantially higher than their testing phase top score of 0.891, which suggests some degree of overfitting (Myronenko et al., 2023).

3.1 Notable Challenge Cases and Statistics

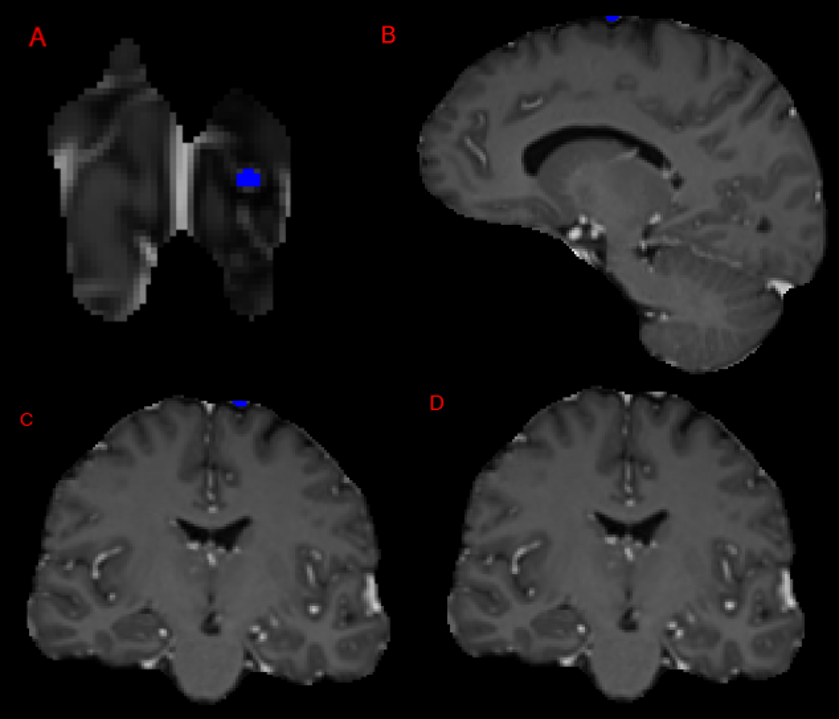

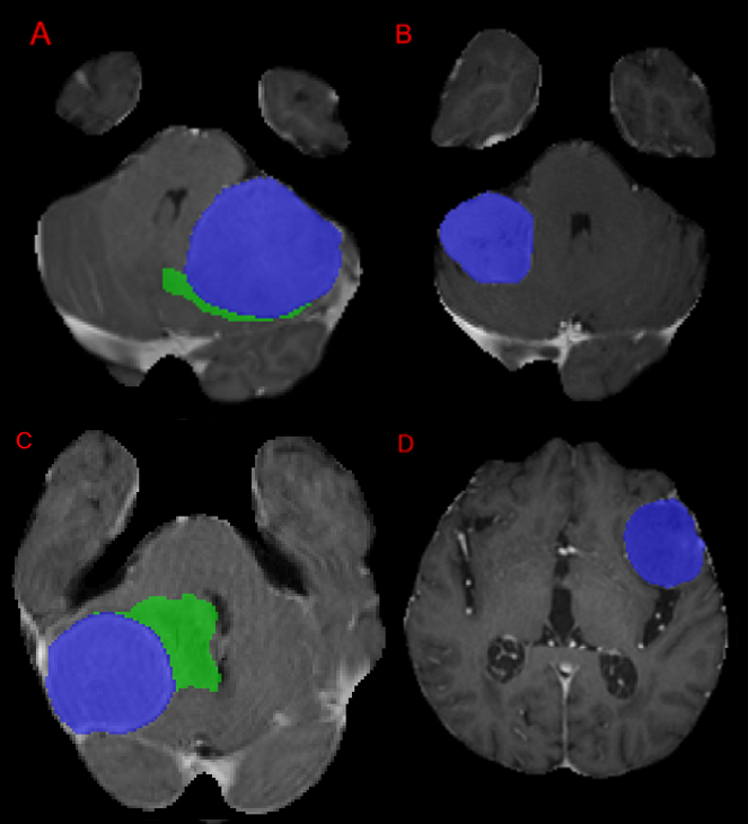

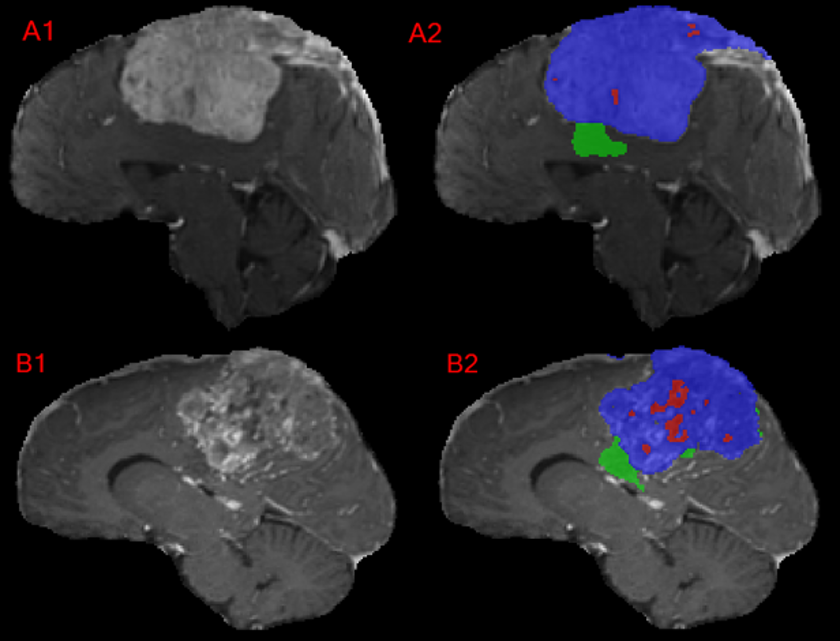

The top median DSC across all participants for a specific case was a perfect score of 1.00 for enhancing tumor and tumor core as shown in Figure 5. Note that there was a total of 33 enhancing tumor voxels, and 23 were abutting the edge of the MR image. The cases with the next highest overall median and average DSC are shown in Figure 6. Note that the ET volumes are qualitatively much greater in these particularly high DSC cases.

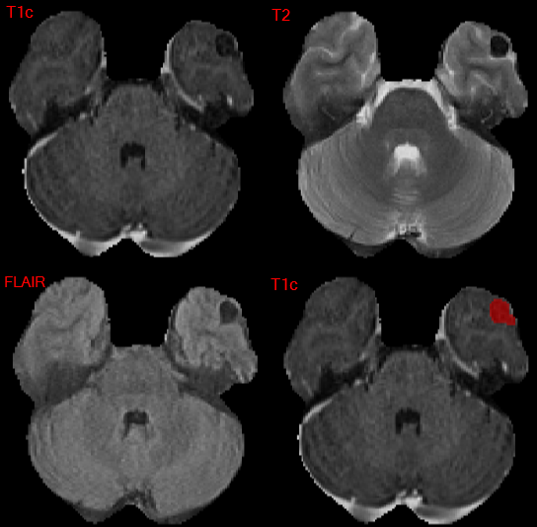

Two meningioma cases with somewhat unusual imaging appearance, shown in Figures 7 and 8, had poor test performance metrics across all participants. Notably, non-enhancing tumor made up the majority of the TC and WT in both cases. These cases correspond to heavily calcified lesions with little or no visible enhancement and low signal intensity on all provided sequences.


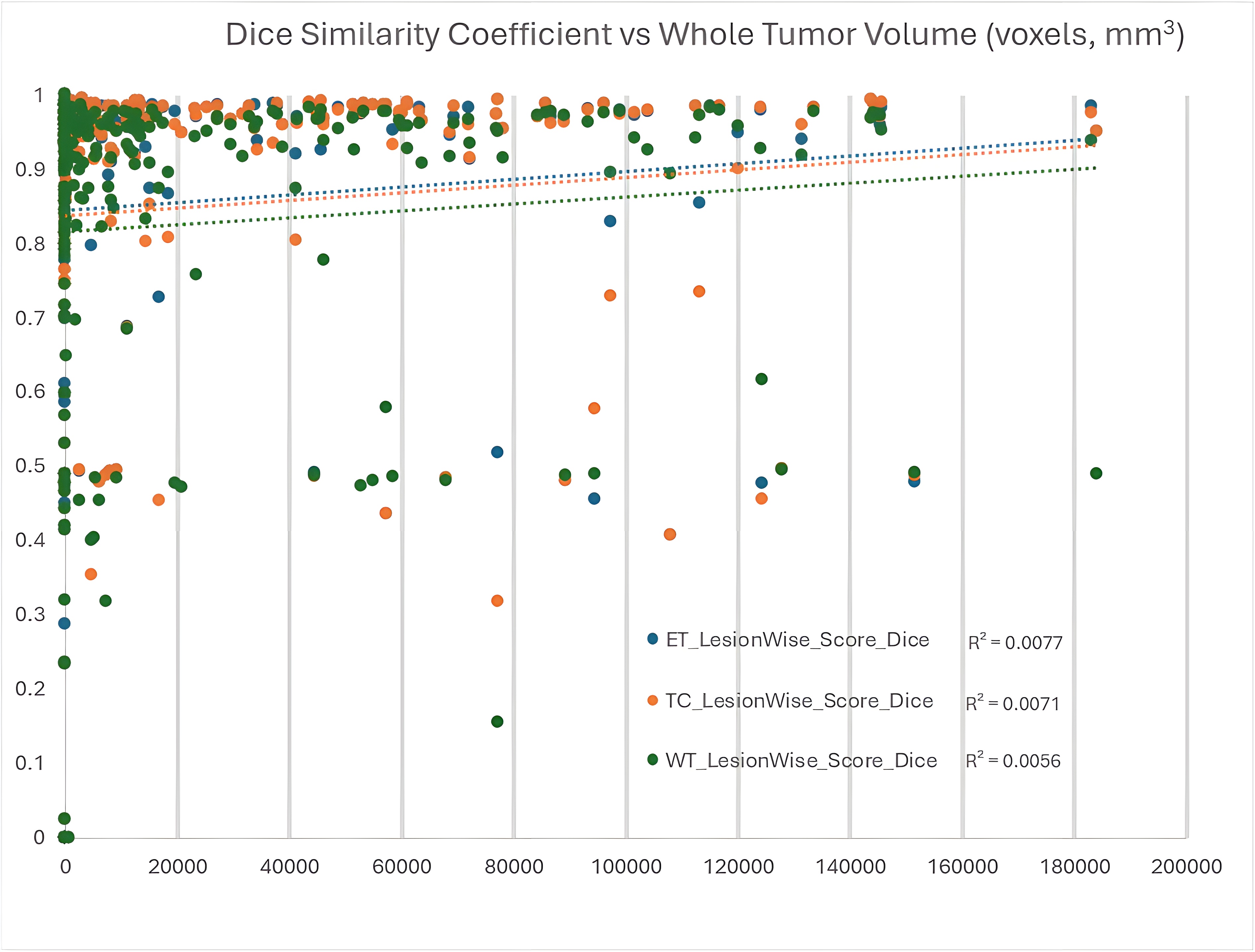

Each region of interest’s DSC was compared to WT volume for each respective case, as shown in Figure 9. There was a nonsignificant positive linear correlation between DSC and WT volume for each of the three regions of interest, ET, TC, and WT, with p values of 0.696, 0.689, and 0.741, respectively. There was also a nonsignificant logarithmic correlation between DSC and WT volume for ET, TC, and WT, with p values of 0.102, 0.093, and 0.200, respectively (not shown in figure). Notably, Figure 9 also demonstrates a substantial number of cases with a lesion-wise DSC of approximately 0.5 for each of the regions of interest. This occurs because the lesion-wise metrics assign a value of 0 to each false positive prediction and to each missed ground truth lesion (false negative); when one such lesion is averaged with a very strong score for another ground truth lesion in the same case, the result is close to 0.5.

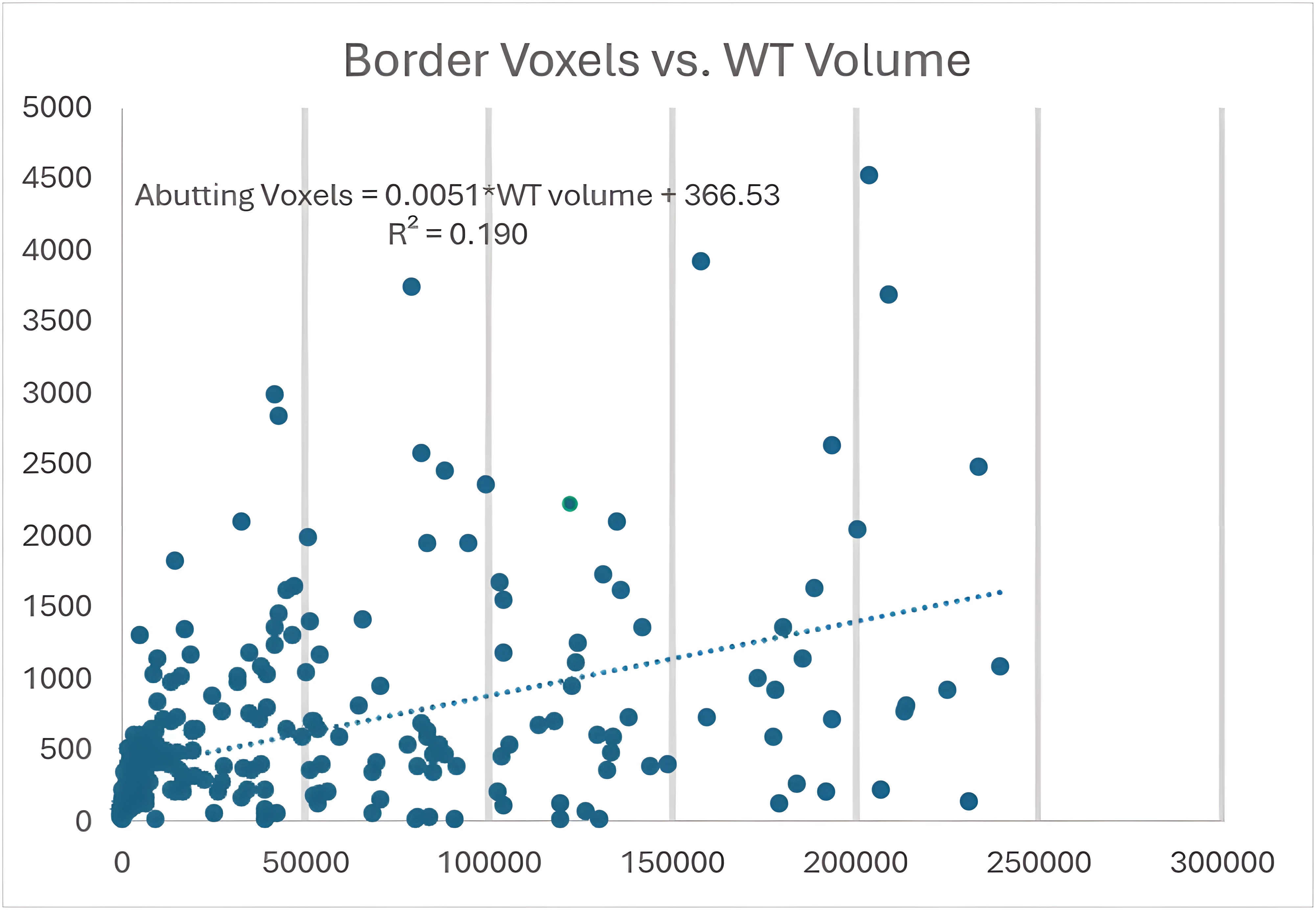

Skull-stripping resulted in 908 of 1000 training cases, 129 of 141 validation cases, and 257 of 283 test cases’ meningioma labels (1286 of 1424, 90.3% overall) having at least 1 compartment voxel abutting the edge of the skull-stripped image, as seen in the example case in Figure 10. Of the 257 aforementioned cases, the average and median number of abutting voxels was 628.7 and 394 voxels, respectively. Figure 11 shows the relationship between the number of abutting voxels and the WT volume (r = 0.190 and p = 0.002).
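The correlation reported here can be sanity-checked with a short sketch. The `pearson_r` function is a plain implementation of the Pearson correlation coefficient, and `t_statistic` converts a coefficient into the t value used for its significance test. The sample size of 257 is an assumption made for this check (the number of test cases with abutting voxels), since the text does not state which cases enter the correlation.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (spread_x * spread_y)


def t_statistic(r, n):
    """t value for testing r against zero, with n - 2 degrees of freedom."""
    return r * sqrt((n - 2) / (1 - r ** 2))


# Assumed sample size: the 257 test cases with abutting voxels.
t_value = t_statistic(0.190, 257)
print(round(t_value, 2))
```

Under that assumption the t value is about 3.09 with 255 degrees of freedom, which corresponds to a two-sided p-value of a few thousandths and is therefore in line with the reported p = 0.002. A weak coefficient can still be statistically significant at this sample size.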

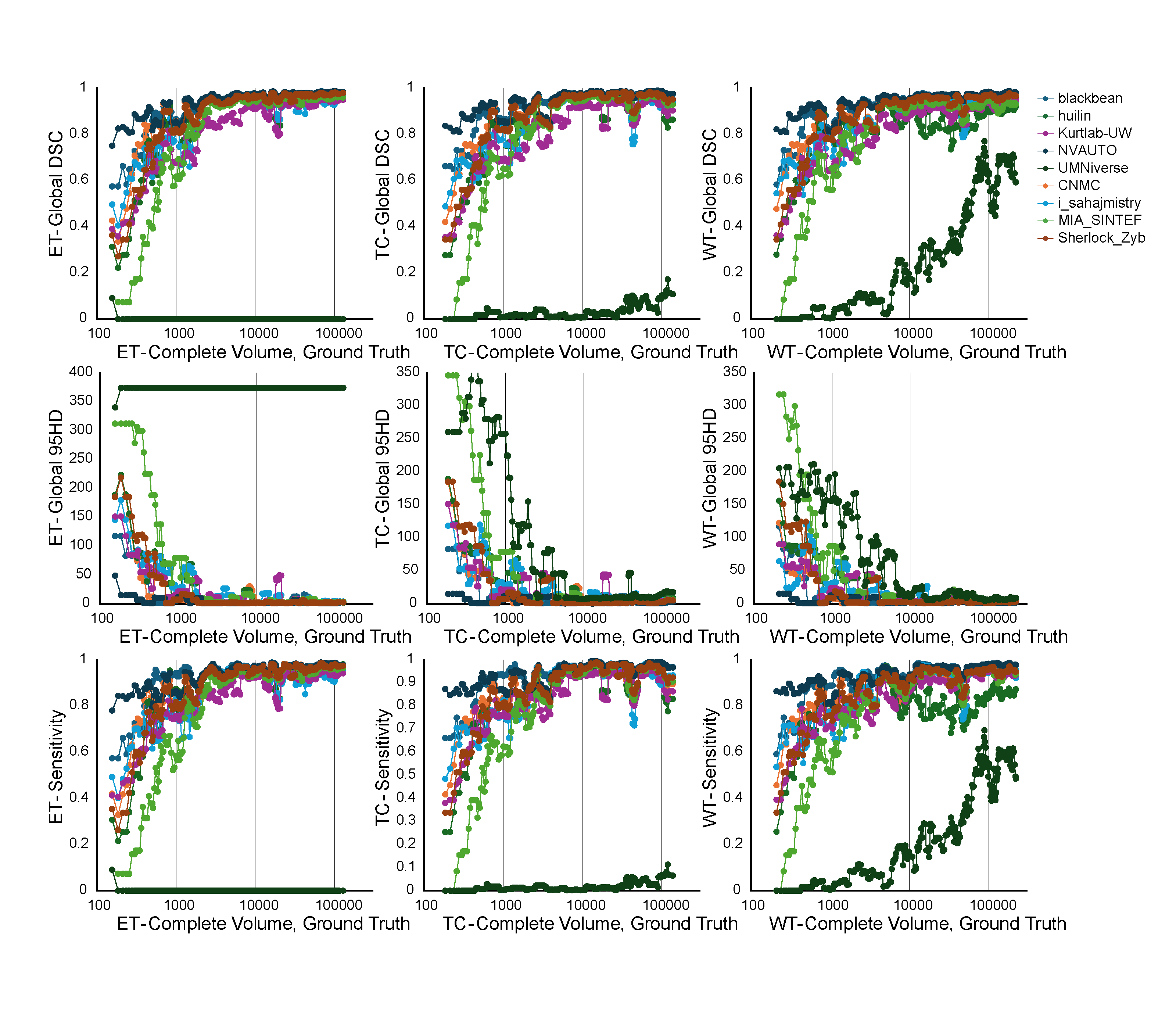

While global metrics (i.e., those used in previous BraTS challenges) were not used for ranking in the 2023 challenge, we have included Figure 12 to show the relationship between the complete tumor volume and each of the global metrics in cases with only a single lesion for each of the regions of interest. Note that there is a noticeable trend towards improved global DSC, global 95HD, and global sensitivity as complete volume increases.

4 Discussion

4.1 Challenge Summary

The BraTS 2023 Intracranial Meningioma Segmentation Challenge provided unprecedented insight into the state-of-the-art performance in pre-operative meningioma segmentation, leveraging the largest multi-institutional, systematically expert-annotated, multilabel meningioma MRI dataset to date (Calabrese and LaBella, 2023; LaBella et al., 2024). The challenge saw remarkable performances, particularly from the NVAUTO team, in DSC and 95HD across the ET, TC, and WT regions of interest. It was notable that DSC scores were, on average, higher for the meningioma challenge than for all other BraTS 2023 segmentation sub-challenges, which may be due to the relatively lower complexity of meningiomas compared to other tumor types and/or the relatively high quality and consistency of the meningioma dataset. These lesion-wise DSC and 95HD scores should be considered as benchmarks for future pre-operative meningioma segmentation evaluation. Note that lesion-wise metrics are essential for segmentation tasks with potential for more than one distinct lesion, as global metrics may remain relatively high even if a single, smaller lesion is completely missed by the segmentation algorithm.
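The difference between global and lesion-wise scoring can be illustrated with a toy case. The sketch below assumes voxels are represented as sets of indices and that the lesion-wise score is the unweighted mean of per-lesion DSC values; the lesion sizes are hypothetical.

```python
def dice(pred, truth):
    """Dice similarity coefficient between two sets of voxel indices."""
    if not pred and not truth:
        return 1.0
    return 2 * len(pred & truth) / (len(pred) + len(truth))


# Toy case: a large lesion (10,000 voxels) and a small one (100 voxels).
large = set(range(0, 10_000))
small = set(range(20_000, 20_100))
truth = large | small

# A hypothetical model segments the large lesion perfectly and misses the small one.
pred = set(large)

global_dsc = dice(pred, truth)
# Lesion-wise: score each ground truth lesion separately; the missed lesion scores 0.
lesion_wise_mean = (dice(pred & large, large) + dice(pred & small, small)) / 2

print(round(global_dsc, 3), lesion_wise_mean)
```

Here the global DSC stays above 0.99 although an entire lesion is missed, while the lesion-wise mean drops to 0.5.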

4.2 The Best Algorithm and Caveats

The superior performance of NVAUTO, with lesion-wise median DSC values of 0.976, 0.976, and 0.964 and lesion-wise average DSC values of 0.899, 0.904, and 0.871 for ET, TC, and WT, respectively, signifies a notable advancement in automated meningioma segmentation algorithms. These results not only demonstrate the feasibility of achieving high accuracy in meningioma segmentation, but also suggest that deep learning models can effectively adapt to the diverse morphology and anatomical locations of meningiomas. NVAUTO’s base algorithm, Auto3DSeg, is an open-source, PyTorch-based framework that is adaptable to a variety of medical imaging automated segmentation challenges (Myronenko et al., 2023). Auto3DSeg allows for auto-scaling to available GPUs; 5-fold training with SegResNet, DiNTS, and SwinUNETR models; and inference and ensembling using each of the multiple trained models. For the challenges, NVAUTO found the SegResNet model to be the most accurate, and it was ultimately the model architecture selected by Auto3DSeg for each of the automated segmentation challenges (Myronenko et al., 2023). Additionally, Auto3DSeg allows for use of a variety of image modalities and is not limited to MRI. Auto3DSeg is advertised to run with 8 GB of GPU RAM. However, for the 2023 BraTS challenges, NVAUTO used 8 x 16GB NVIDIA V100 machines (Myronenko et al., 2023). Future studies should assess the ability to use Auto3DSeg on more widely available consumer-grade GPUs and on non-NVIDIA GPUs.
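The general idea of 5-fold training followed by ensembling can be sketched in a few lines. This is not Auto3DSeg code: the interleaved fold assignment, the mean-probability ensembling rule, the 0.5 threshold, and all names are assumptions made for illustration, and the framework's actual implementation may differ.

```python
def five_fold_splits(case_ids, n_folds=5):
    """Yield (train_ids, val_ids) pairs; each case is validated exactly once."""
    folds = [case_ids[i::n_folds] for i in range(n_folds)]
    for k in range(n_folds):
        train = [c for i, fold in enumerate(folds) if i != k for c in fold]
        yield train, folds[k]


def ensemble_mean(fold_probabilities):
    """Average the per-voxel tumor probabilities predicted by each fold's model."""
    n_models = len(fold_probabilities)
    return [sum(voxel) / n_models for voxel in zip(*fold_probabilities)]


# Hypothetical IDs standing in for the 1000 training cases.
cases = [f"case_{i:04d}" for i in range(1000)]
splits = list(five_fold_splits(cases))

# Hypothetical probabilities for three voxels from the five fold models.
probs = [
    [0.9, 0.2, 0.7],
    [0.8, 0.1, 0.4],
    [1.0, 0.3, 0.5],
    [0.7, 0.2, 0.6],
    [0.6, 0.2, 0.6],
]
mask = [p >= 0.5 for p in ensemble_mean(probs)]
print(len(splits[0][0]), len(splits[0][1]), mask)
```

Each fold trains on 800 cases and validates on the remaining 200, and the final mask is obtained by thresholding the averaged probabilities.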

4.3 Overall Segmentation Performance

The challenge results revealed a broad range of performance among the 9 participating teams, with DSC scores for the ET ranging from 0.899 to 0.007. Such variability underscores the challenge’s complexity and the diverse approaches to segmentation. When NVAUTO’s meningioma segmentation performance was compared with their performance on the other challenges, they performed best on meningioma for the ET and TC regions of interest but only second best for WT, as shown in Table 6. NVAUTO’s algorithm achieved a higher WT DSC on Sub-Saharan Africa gliomas than on any other tumor type. Since meningiomas tend to have a smaller overall whole tumor volume and a higher ET or TC to WT ratio compared to glioblastoma, it can be hypothesized that less training information is available for creating accurate SNFH compartment labels and, consequently, the WT region of interest (Ogasawara et al., 2021; Gilard et al., 2021; Khandwala et al., 2021).

| Challenge | ET (Avg DSC) | TC (Avg DSC) | WT (Avg DSC) |
|---|---|---|---|
| Meningioma | 0.899 | 0.904 | 0.870 |
| Glioma | 0.810 | 0.830 | 0.840 |
| Sub-Saharan Africa | 0.790 | 0.840 | 0.910 |
| Metastasis | 0.600 | 0.650 | 0.620 |
| Pediatric | 0.550 | 0.780 | 0.840 |
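The ranking described in the text can be checked directly from the tabulated values. The short sketch below copies the average DSC values from the table above into a dictionary; the helper name is illustrative.

```python
# Average DSC per BraTS 2023 sub-challenge for NVAUTO, copied from the table above.
avg_dsc = {
    "Meningioma": {"ET": 0.899, "TC": 0.904, "WT": 0.870},
    "Glioma": {"ET": 0.810, "TC": 0.830, "WT": 0.840},
    "Sub-Saharan Africa": {"ET": 0.790, "TC": 0.840, "WT": 0.910},
    "Metastasis": {"ET": 0.600, "TC": 0.650, "WT": 0.620},
    "Pediatric": {"ET": 0.550, "TC": 0.780, "WT": 0.840},
}


def rank_by_region(table, region):
    """Return challenge names sorted from highest to lowest DSC for one region."""
    return sorted(table, key=lambda name: table[name][region], reverse=True)


for region in ("ET", "TC", "WT"):
    print(region, rank_by_region(avg_dsc, region)[:2])
```

Meningioma ranks first for ET and TC and second for WT, behind Sub-Saharan Africa glioma.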

4.4 Limitations of the BraTS Meningioma Benchmark

Our analysis revealed that all participating teams performed relatively poorly on heavily calcified meningiomas with little or no enhancing tumor. For example, teams had an average TC DSC of only 0.156 for one particular non-enhancing meningioma case. This relatively poor performance is presumably related to the relative rarity of this imaging appearance of meningioma, and the fact that only a small number of such cases were included in the training dataset. Future datasets should include more cases of exclusively non-enhancing tumor to allow for more generalizable automated segmentation models. It is important to consider that radiotherapy plans only consider a single GTV represented by the TC, and whether these indeterminate TC regions are labeled as non-enhancing vs enhancing regions would not impact the resulting treatment volumes (Rogers et al., 2017, 2020).

Note that in Figure 9, the lines of best fit for ET, TC, and WT trend towards improved DSC with increased WT volume. In an automated segmentation challenge, this could cause a higher perceived test set overall DSC score if a larger proportion of larger tumors are included in the test set compared to the overall population. Therefore, it is important to ensure balance within the training, validation, and test sets regarding tumor size, which was not explicitly done for this iteration of the challenge.
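A size-balanced split could be produced by stratifying on tumor volume. The following is a minimal sketch, assuming equal-count volume bins and the 70/10/20 proportions of this dataset; the number of bins, the synthetic volumes, and the function name are illustrative choices and were not part of this challenge.

```python
import random


def size_stratified_split(volumes, fractions=(0.7, 0.1, 0.2), n_bins=4, seed=0):
    """Split case IDs into train/val/test so each set samples every size bin.

    volumes: dict mapping case ID -> WT volume. Bins are equal-count bins of
    the volume-sorted cases (an illustrative choice).
    """
    rng = random.Random(seed)
    ordered = sorted(volumes, key=volumes.get)
    bin_size = -(-len(ordered) // n_bins)  # ceiling division
    splits = ([], [], [])
    for start in range(0, len(ordered), bin_size):
        bin_ids = ordered[start:start + bin_size]
        rng.shuffle(bin_ids)
        n_train = round(fractions[0] * len(bin_ids))
        n_val = round(fractions[1] * len(bin_ids))
        splits[0].extend(bin_ids[:n_train])
        splits[1].extend(bin_ids[n_train:n_train + n_val])
        splits[2].extend(bin_ids[n_train + n_val:])
    return splits


# Hypothetical WT volumes (arbitrary units) for 200 cases.
gen = random.Random(1)
volumes = {f"case_{i:03d}": gen.lognormvariate(2.0, 1.0) for i in range(200)}
train, val, test = size_stratified_split(volumes)
print(len(train), len(val), len(test))
```

Because each volume bin contributes the same proportions to every set, small and large tumors are represented similarly in training, validation, and testing.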

Another notable limitation of our study is the absence of explicit testing on out-of-distribution (OOD) cases. While our model demonstrated strong performance on the provided BraTS 2023 meningioma dataset, all data were derived from a limited number of institutions, and no evaluation was performed on data from external sources or significantly different MRI acquisition protocols. Consequently, the generalization ability of the model to cases from different populations, MRI machines, or acquisition settings remains untested. Future work should focus on assessing the model’s performance on OOD data to ensure its robustness and applicability in real-world clinical environments. Techniques such as domain adaptation and cross-institutional validation will be crucial to improve the model’s generalization capabilities and reliability across diverse clinical settings.

4.5 Future Directions

Future studies involving meningioma automated segmentation should focus on the most important clinical volumes, particularly the tumor core which comprises the radiotherapy gross tumor volume (GTV). Additionally, future studies should focus on the segmentation of meningioma along the intra-axial and extra-axial border with various face anonymization pre-processing techniques, due to the high frequency for meningioma to be excluded by skull-stripping as demonstrated by our results (Watts et al., 2014; Schwarz et al., 2019, 2021).

This study analyzed the propensity of meningioma to abut the boundary of the skull-stripped image, which creates the potential for portions of the meningioma to be excluded from the skull-stripped image. Because skull-stripping resulted in 1286 of 1424 meningioma cases having at least 1 compartment voxel abutting the boundary of the skull-stripped image, future studies should evaluate a different pre-processing anonymization technique that allows more of the intracranial meningioma volume to be included. Schwarz et al. describe a promising mri_reface technique that performs face anonymization by modifying the face and ear regions of the MR head image to represent an average human face and ear, thereby preserving the vast majority of the MR head image (Schwarz et al., 2019, 2021). Bischoff-Grethe et al. describe mri_deface, a defacing tool that assigns each voxel a probability of being “face” or “brain” and removes voxels that have a non-zero probability of being “face” but zero probability of being “brain” (Theyers et al., 2021; Bischoff-Grethe et al., 2007).
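The voxel removal rule described for mri_deface can be written as a single condition. The sketch below only illustrates that rule on hypothetical per-voxel probabilities; it is not the tool's implementation, and the function name and flat-list representation are assumptions.

```python
def deface_mask(p_face, p_brain):
    """Return True for voxels to remove: possibly face, and never brain."""
    return [pf > 0.0 and pb == 0.0 for pf, pb in zip(p_face, p_brain)]


# Hypothetical per-voxel probabilities for four voxels.
p_face = [0.9, 0.3, 0.0, 0.4]
p_brain = [0.0, 0.2, 0.0, 0.0]
removed = deface_mask(p_face, p_brain)
print(removed)
```

A voxel with any probability of being brain is retained, which is why such a rule is expected to preserve more peripheral intracranial tissue than skull-stripping.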

Furthermore, while current segmentation approaches predominantly utilize MRI, atypical meningiomas, such as those that are completely non-enhancing or heavily calcified, remain challenging to segment automatically due to low signal intensity and limited contrast. In these cases, integrating additional imaging modalities could prove highly beneficial. PET imaging, especially with tracers like 68Ga-DOTATATE, provides metabolic information that can help differentiate viable tumor tissue from calcified or fibrotic areas (Prasad et al., 2022). Likewise, CT offers superior spatial resolution for delineating calcifications and osseous involvement, which is critical when meningiomas extend into or invade bone (Salah et al., 2019). Indeed, studies have demonstrated that PET and CT can outperform MRI in identifying bony invasion and calcification in meningiomas (Galldiks et al., 2023; Salah et al., 2019). Thus, the incorporation of multi-modal imaging may lead to more robust segmentation algorithms capable of addressing the full spectrum of meningioma presentations.

RTOG 0539, a phase II trial of observation for low-risk meningiomas and of radiotherapy for intermediate- and high-risk meningiomas, describes radiation treatment planning and target volume protocols that should be used for meningiomas (Rogers et al., 2017, 2020). For radiation planning, the protocol required only pre-operative and post-operative contrast-enhanced MRI. RTOG 0539 defines the GTV to encompass the tumor bed on post-operative enhanced MRI and to include any residual nodular enhancement. The protocol also states that the trailing linear dural tail and cerebral edema should not be specifically included within the GTV, since there is no evidence that recurrence is more likely within the dural tail (Rogers et al., 2017, 2020).

Therefore, future studies that focus on automated segmentation of meningioma for radiation therapy planning should place emphasis on evaluation of the enhancing tumor and post-op resection bed volumes on post-operative T1Gd treatment planning MRI, while reducing emphasis on the surrounding non-enhancing FLAIR hyperintensity compartment and the small trailing linear dural tail enhancement.

However, in the post-treatment follow-up setting, RTOG 0539 still required the use of T1, T2, FLAIR, and T1Gd series, which is similar to the imaging used in the BraTS 2023 Challenge: Intracranial Meningioma. For this challenge’s pre-operative meningioma dataset, the enhancing and non-enhancing tumor labels compose the tumor core. The SNFH label representing edema, which was included in the WT region of interest, is not typically included within radiation therapy meningioma target volumes.
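The relationship between the compartment labels and the regions of interest can be expressed as a small lookup. In the sketch below the integer label values (1, 2, 3) are an assumption and should be checked against the dataset documentation; the flat list stands in for a 3D label map.

```python
# Assumed integer label values; verify against the dataset documentation before use.
NON_ENHANCING, SNFH, ENHANCING = 1, 2, 3

REGIONS = {
    "ET": {ENHANCING},
    "TC": {ENHANCING, NON_ENHANCING},        # tumor core
    "WT": {ENHANCING, NON_ENHANCING, SNFH},  # whole tumor, includes SNFH
}


def region_mask(labels, region):
    """Binary mask for a region of interest from a flat list of voxel labels."""
    return [int(v in REGIONS[region]) for v in labels]


voxels = [0, 1, 2, 3, 3, 2, 0]  # toy label map, 0 = background
tc = region_mask(voxels, "TC")
wt = region_mask(voxels, "WT")
print(sum(tc), sum(wt))
```

Only the TC mask excludes the SNFH voxels, consistent with the TC being the region closest to a radiotherapy target volume.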

5 Conclusion

The BraTS 2023 Intracranial Meningioma Segmentation Challenge has marked a significant step forward in the segmentation of meningioma tumors, highlighting both the potential and limitations of current methodologies. As the field moves forward, a focus on enhancing dataset diversity, refining pre-processing techniques, and tailoring segmentation tasks to specific clinical needs will be crucial in translating these advancements into clinical practice.

Acknowledgments

Research reported in this publication was partly supported by the National Institutes of Health (NIH) under award numbers: NCI K08CA256045, NCI/ITCR U24CA279629, and NCI/ITCR U01CA242871. The content of this publication is solely the responsibility of the authors and does not represent the official views of the NIH.

Developing large and well curated mpMRI datasets for automated segmentation model development requires significant time and expertise from neuro-radiology experts. We are grateful to everyone who contributed to the development and review of the tumor volume labels including volunteer annotators/approvers from the American Society of Neuroradiology.

Ethical Standards

The work follows appropriate ethical standards in conducting research and writing the manuscript, following all applicable laws and regulations regarding treatment of animals and human subjects. All participating sites had institutional review board (IRB) approval. A waiver for informed consent was provided by each institution’s respective IRB.

Conflicts of Interest

We declare we do not have conflicts of interest.

Data availability

The BraTS Meningioma Pre-operative Dataset training (1,000/1,424, 70%) and validation (141/1,424, 10%) data are publicly available on Synapse (Calabrese and LaBella, 2023; LaBella et al., 2024). The testing dataset (283/1,424, 20%) will be kept private for the foreseeable future to allow for the unbiased assessment of future segmentation algorithms.

References

- bra (2023) Synapse: Brain tumor segmentation (brats) cluster of challenges. https://www.synapse.org/#!Synapse:syn51156910/wiki/, 2023.

- Akiba et al. (2019) Takuya Akiba, Shotaro Sano, Toshihiko Yanase, Takeru Ohta, and Masanori Koyama. Optuna: A next-generation hyperparameter optimization framework. In Proceedings of the 25th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, 2019.

- Baid et al. (2021) Ujjwal Baid, Satyam Ghodasara, Suyash Mohan, Michel Bilello, Evan Calabrese, Errol Colak, Keyvan Farahani, Jayashree Kalpathy-Cramer, Felipe C Kitamura, Sarthak Pati, et al. The rsna-asnr-miccai brats 2021 benchmark on brain tumor segmentation and radiogenomic classification. arXiv preprint arXiv:2107.02314, 2021.

- Bakas et al. (2017) Spyridon Bakas, Hamed Akbari, Aristeidis Sotiras, Michel Bilello, Martin Rozycki, Justin S Kirby, John B Freymann, Keyvan Farahani, and Christos Davatzikos. Advancing the cancer genome atlas glioma mri collections with expert segmentation labels and radiomic features. Scientific data, 4(1):1–13, 2017.

- Bischoff-Grethe et al. (2007) Amanda Bischoff-Grethe, I Burak Ozyurt, Evelina Busa, Brian T Quinn, Christine Fennema-Notestine, Camellia P Clark, Shaunna Morris, Mark W Bondi, Terry L Jernigan, Anders M Dale, et al. A technique for the deidentification of structural brain mr images. Human brain mapping, 28(9):892–903, 2007.

- Bouget et al. (2022) David Bouget, André Pedersen, Asgeir S Jakola, Vasileios Kavouridis, Kyrre E Emblem, Roelant S Eijgelaar, Ivar Kommers, Hilko Ardon, Frederik Barkhof, Lorenzo Bello, et al. Preoperative brain tumor imaging: Models and software for segmentation and standardized reporting. Frontiers in neurology, 13:932219, 2022.

- Calabrese and LaBella (2023) E. Calabrese and D. LaBella. 2023 brats meningioma dataset. https://www.synapse.org/#!Synapse:syn51514106, 2023.

- Capellan-Martin (2023) D. Capellan-Martin. Brats challenge 2023: Model ensemble for brain tumor segmentation in mri. In MICCAI, Vancouver, Canada, 2023.

- Consortium (2020) The MONAI Consortium. Project monai. https://doi.org/10.5281/zenodo.4323059, 2020.

- Galldiks et al. (2023) Norbert Galldiks, Nathalie L Albert, Michael Wollring, Jan-Michael Werner, Philipp Lohmann, Javier E Villanueva-Meyer, Gereon R Fink, Karl-Josef Langen, and Joerg-Christian Tonn. Advances in pet imaging for meningioma patients. Neuro-oncology advances, 5(Supplement_1):i84–i93, 2023.

- Gilard et al. (2021) Vianney Gilard, Abdellah Tebani, Ivana Dabaj, Annie Laquerrière, Maxime Fontanilles, Stéphane Derrey, Stéphane Marret, and Soumeya Bekri. Diagnosis and management of glioblastoma: A comprehensive perspective. Journal of personalized medicine, 11(4):258, 2021.

- Gordillo et al. (2013) Nelly Gordillo, Eduard Montseny, and Pilar Sobrevilla. State of the art survey on mri brain tumor segmentation. Magnetic resonance imaging, 31(8):1426–1438, 2013.

- Havaei et al. (2017) Mohammad Havaei, Axel Davy, David Warde-Farley, Antoine Biard, Aaron Courville, Yoshua Bengio, Chris Pal, Pierre-Marc Jodoin, and Hugo Larochelle. Brain tumor segmentation with deep neural networks. Medical image analysis, 35:18–31, 2017.

- Huang et al. (2023a) Z. Huang, H. Wang, Z. Deng, J. Ye, Y. Su, H. Sun, J. He, Y. Gu, L. Gu, and S. Zhang. Stu-net: Scalable and transferable medical image segmentation models empowered by large-scale supervised pre-training. arXiv preprint arXiv:2304.06716, 2023a.

- Huang et al. (2023b) Z. Huang et al. Exploring model size and patch size for brats23 challenge. In MICCAI, Vancouver, Canada, 2023b.

- Isensee et al. (2021) Fabian Isensee, Paul F Jaeger, Simon AA Kohl, Jens Petersen, and Klaus H Maier-Hein. nnu-net: a self-configuring method for deep learning-based biomedical image segmentation. Nature methods, 18(2):203–211, 2021.

- Işın et al. (2016) Ali Işın, Cem Direkoğlu, and Melike Şah. Review of mri-based brain tumor image segmentation using deep learning methods. Procedia Computer Science, 102:317–324, 2016.

- Juluru et al. (2020) Krishna Juluru, Eliot Siegel, and Jan Mazura. Identification from mri with face-recognition software. The New England Journal of Medicine, 382(5):489–490, 2020.

- Karargyris et al. (2023) Alexandros Karargyris, Renato Umeton, Micah J Sheller, Alejandro Aristizabal, Johnu George, Anna Wuest, Sarthak Pati, Hasan Kassem, Maximilian Zenk, Ujjwal Baid, et al. Federated benchmarking of medical artificial intelligence with medperf. Nature Machine Intelligence, 5(7):799–810, 2023.

- Kazerooni et al. (2023) Anahita Fathi Kazerooni, Nastaran Khalili, Xinyang Liu, Debanjan Haldar, Zhifan Jiang, Syed Muhammed Anwar, Jake Albrecht, Maruf Adewole, Udunna Anazodo, Hannah Anderson, et al. The brain tumor segmentation (brats) challenge 2023: Focus on pediatrics (cbtn-connect-dipgr-asnr-miccai brats-peds). ArXiv, 2023.

- Khandwala et al. (2021) Kumail Khandwala, Fatima Mubarak, and Khurram Minhas. The many faces of glioblastoma: Pictorial review of atypical imaging features. The Neuroradiology Journal, 34(1):33–41, 2021.

- LaBella (2024) Dominic LaBella. MeningiomaAnalysis. https://doi.org/10.5281/zenodo.13936365, 2024.

- LaBella et al. (2023) Dominic LaBella, Maruf Adewole, Michelle Alonso-Basanta, Talissa Altes, Syed Muhammad Anwar, Ujjwal Baid, Timothy Bergquist, Radhika Bhalerao, Sully Chen, Verena Chung, et al. The asnr-miccai brain tumor segmentation (brats) challenge 2023: Intracranial meningioma. arXiv preprint arXiv:2305.07642, 2023.

- LaBella et al. (2024) Dominic LaBella, Omaditya Khanna, Shan McBurney-Lin, Ryan Mclean, Pierre Nedelec, Arif S. Rashid, Nourel hoda Tahon, Talissa Altes, Ujjwal Baid, Radhika Bhalerao, Yaseen Dhemesh, Scott Floyd, Devon Godfrey, Fathi Hilal, Anastasia Janas, Anahita Kazerooni, Collin Kent, John Kirkpatrick, Florian Kofler, Kevin Leu, Nazanin Maleki, Bjoern Menze, Maxence Pajot, Zachary J. Reitman, Jeffrey D. Rudie, Rachit Saluja, Yury Velichko, Chunhao Wang, Pranav I. Warman, Nico Sollmann, David Diffley, Khanak K. Nandolia, Daniel I Warren, Ali Hussain, John Pascal Fehringer, Yulia Bronstein, Lisa Deptula, Evan G. Stein, Mahsa Taherzadeh, Eduardo Portela de Oliveira, Aoife Haughey, Marinos Kontzialis, Luca Saba, Benjamin Turner, Melanie M. T. Brüßeler, Shehbaz Ansari, Athanasios Gkampenis, David Maximilian Weiss, Aya Mansour, Islam H. Shawali, Nikolay Yordanov, Joel M. Stein, Roula Hourani, Mohammed Yahya Moshebah, Ahmed Magdy Abouelatta, Tanvir Rizvi, Klara Willms, Dann C. Martin, Abdullah Okar, Gennaro D’Anna, Ahmed Taha, Yasaman Sharifi, Shahriar Faghani, Dominic Kite, Marco Pinho, Muhammad Ammar Haider, Michelle Alonso-Basanta, Javier Villanueva-Meyer, Andreas M. Rauschecker, Ayman Nada, Mariam Aboian, Adam Flanders, Spyridon Bakas, and Evan Calabrese. A multi-institutional meningioma mri dataset for automated multi-sequence image segmentation. Scientific Data, 11:496, 2024. URL https://doi.org/10.1038/s41597-024-03350-9.

- Martz et al. (2022) N Martz, J Salleron, F Dhermain, G Vogin, JF Daisne, R Mouttet Audouard, R Tanguy, G Noel, M Peyre, I Lecouillard, et al. Anocef consensus guideline on target volume delineation for meningiomas radiotherapy. International Journal of Radiation Oncology, Biology, Physics, 114(3):e46, 2022.

- Menze et al. (2014) Bjoern H Menze, Andras Jakab, Stefan Bauer, Jayashree Kalpathy-Cramer, Keyvan Farahani, Justin Kirby, Yuliya Burren, Nicole Porz, Johannes Slotboom, Roland Wiest, et al. The multimodal brain tumor image segmentation benchmark (brats). IEEE transactions on medical imaging, 34(10):1993–2024, 2014.

- MLCommons Association (2024) MLCommons Association. Mlcube: Standardizing ml deployment. https://mlcommons.org/working-groups/data/mlcube/, 2024. Accessed: 2024-05-08.

- Moawad et al. (2023) Ahmed W Moawad, Anastasia Janas, Ujjwal Baid, Divya Ramakrishnan, Leon Jekel, Kiril Krantchev, Harrison Moy, Rachit Saluja, Klara Osenberg, Klara Wilms, et al. The brain tumor segmentation (brats-mets) challenge 2023: Brain metastasis segmentation on pre-treatment mri. ArXiv, 2023.

- Murek (2024) Michael Murek. Localization of intracranial meningiomas, 2024. URL https://neurochirurgie.insel.ch/en/what-we-treat/brain-tumor/meningioma. Image: University Department of Neurosurgery, Inselspital Bern © CC BY-NC 4.0. Left: Axial view of meningiomas at the base of the skull. Right: Coronal view of meningiomas with location at the cranial dome, the falx cerebri as well as intraventricular.

- Myronenko et al. (2023) A. Myronenko, D. Yang, Y. He, and D. Xu. Auto3dseg for brain tumor segmentation from 3d mri in brats 2023 challenge. In MICCAI, Vancouver, Canada, 2023.

- Myronenko (2018) Andriy Myronenko. 3d mri brain tumor segmentation using autoencoder regularization. In International MICCAI Brainlesion Workshop, pages 311–320. Springer, Cham, 2018.

- Ogasawara et al. (2021) Christian Ogasawara, Brandon D Philbrick, and D Cory Adamson. Meningioma: a review of epidemiology, pathology, diagnosis, treatment, and future directions. Biomedicines, 9(3):319, 2021.

- Pati et al. (2022) Sarthak Pati, Ujjwal Baid, Brandon Edwards, Micah J Sheller, Patrick Foley, G Anthony Reina, Siddhesh Thakur, Chiharu Sako, Michel Bilello, Christos Davatzikos, et al. The federated tumor segmentation (fets) tool: an open-source solution to further solid tumor research. Physics in Medicine & Biology, 67(20):204002, 2022.

- Pati et al. (2023) Sarthak Pati, Siddhesh P Thakur, İbrahim Ethem Hamamcı, Ujjwal Baid, Bhakti Baheti, Megh Bhalerao, Orhun Güley, Sofia Mouchtaris, David Lang, Spyridon Thermos, et al. Gandlf: the generally nuanced deep learning framework for scalable end-to-end clinical workflows. Communications Engineering, 2(1):23, 2023.

- Pereira et al. (2016) Sérgio Pereira, Adriano Pinto, Victor Alves, and Carlos A Silva. Brain tumor segmentation using convolutional neural networks in mri images. IEEE transactions on medical imaging, 35(5):1240–1251, 2016.

- Prasad et al. (2022) Rahul N Prasad, Haley K Perlow, Joseph Bovi, Steve E Braunstein, Jana Ivanidze, John A Kalapurakal, Christopher Kleefisch, Jonathan PS Knisely, Minesh P Mehta, Daniel M Prevedello, et al. 68ga-dotatate pet: the future of meningioma treatment. International Journal of Radiation Oncology, Biology, Physics, 113(4):868–871, 2022.

- Rogers et al. (2020) C Leland Rogers, Minhee Won, Michael A Vogelbaum, Arie Perry, Lynn S Ashby, Jignesh M Modi, Anthony M Alleman, James Galvin, Shannon E Fogh, Emad Youssef, et al. High-risk meningioma: initial outcomes from nrg oncology/rtog 0539. International Journal of Radiation Oncology* Biology* Physics, 106(4):790–799, 2020.

- Rogers et al. (2017) Leland Rogers, Peixin Zhang, Michael A Vogelbaum, Arie Perry, Lynn S Ashby, Jignesh M Modi, Anthony M Alleman, James Galvin, David Brachman, Joseph M Jenrette, et al. Intermediate-risk meningioma: initial outcomes from nrg oncology rtog 0539. Journal of neurosurgery, 129(1):35–47, 2017.

- Rudie (2023) J. Rudie. Brats 2023 segmentation metrics: Clinical relevance. In Proceedings of the Medical Image Computing and Computer Assisted Intervention – MICCAI, Vancouver, Canada, 2023.

- Salah et al. (2019) Florian Salah, A Tabbarah, K Asmar, H Tamim, M Makki, A Sibahi, R Hourani, et al. Can ct and mri features differentiate benign from malignant meningiomas? Clinical Radiology, 74(11):898–e15, 2019.

- Saluja (2023) R. Saluja. Lesion-wise performance metrics for brats-2023 segmentation challenges. In Proceedings of the Medical Image Computing and Computer Assisted Intervention – MICCAI, Vancouver, Canada, 2023.

- Schwarz et al. (2019) Christopher G Schwarz, Walter K Kremers, Terry M Therneau, Richard R Sharp, Jeffrey L Gunter, Prashanthi Vemuri, Arvin Arani, Anthony J Spychalla, Kejal Kantarci, David S Knopman, et al. Identification of anonymous mri research participants with face-recognition software. New England Journal of Medicine, 381(17):1684–1686, 2019.

- Schwarz et al. (2021) Christopher G Schwarz, Walter K Kremers, Heather J Wiste, Jeffrey L Gunter, Prashanthi Vemuri, Anthony J Spychalla, Kejal Kantarci, Aaron P Schultz, Reisa A Sperling, David S Knopman, et al. Changing the face of neuroimaging research: comparing a new mri de-facing technique with popular alternatives. NeuroImage, 231:117845, 2021.

- Smith (2002) Stephen M Smith. Fast robust automated brain extraction. Human brain mapping, 17(3):143–155, 2002.

- Tang et al. (2022) Yucheng Tang, Dong Yang, Wenqi Li, Holger R. Roth, et al. Self-supervised pre-training of swin transformers for 3d medical image analysis. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pages 20730–20740, 2022.

- Thakur et al. (2020) Siddhesh Thakur, Jimit Doshi, Sarthak Pati, Saima Rathore, Chiharu Sako, Michel Bilello, Sung Min Ha, Gaurav Shukla, Adam Flanders, Aikaterini Kotrotsou, et al. Brain extraction on mri scans in presence of diffuse glioma: Multi-institutional performance evaluation of deep learning methods and robust modality-agnostic training. NeuroImage, 220:117081, 2020.

- Thakur et al. (2019) Siddhesh P Thakur, Jimit Doshi, Sarthak Pati, Sung Min Ha, Chiharu Sako, Sanjay Talbar, Uday Kulkarni, Christos Davatzikos, Guray Erus, and Spyridon Bakas. Skull-stripping of glioblastoma mri scans using 3d deep learning. In International MICCAI Brainlesion Workshop, pages 57–68. Springer, 2019.

- Theyers et al. (2021) Athena E Theyers, Mojdeh Zamyadi, Mark O’Reilly, Robert Bartha, Sean Symons, Glenda M MacQueen, Stefanie Hassel, Jason P Lerch, Evdokia Anagnostou, Raymond W Lam, et al. Multisite comparison of mri defacing software across multiple cohorts. Frontiers in psychiatry, 12:617997, 2021.

- Wasserthal et al. (2022) J. Wasserthal, M. Meyer, H.C. Breit, J. Cyriac, S. Yang, and M. Segeroth. Totalsegmentator: robust segmentation of 104 anatomical structures in ct images. arXiv preprint arXiv:2208.05868, 2022.

- Watts et al. (2014) J Watts, G Box, A Galvin, P Brotchie, N Trost, and T Sutherland. Magnetic resonance imaging of meningiomas: a pictorial review. Insights into imaging, 5(1):113–122, 2014.

- Yushkevich et al. (2006) Paul A. Yushkevich, Joseph Piven, Heather Cody Hazlett, Rachel Gimpel Smith, Sean Ho, James C. Gee, and Guido Gerig. User-guided 3D active contour segmentation of anatomical structures: Significantly improved efficiency and reliability. Neuroimage, 31(3):1116–1128, 2006.